Modeling External Business Logic in Data Vault: APIs, Scripts, and Source System Thinking

A question that comes up regularly in Data Vault training is how to handle external business logic — specifically, what happens when your data pipeline includes a call to an external API or service that returns enriched or cleansed data. Where does that fit in the model? How do you capture the response? And how do you integrate an external script cleanly into your enterprise data platform? This post walks through a concrete example: address cleansing via an external REST API.

In this article:

- Modeling External Business Logic: The Full Flow

- The Business Vault Prepares the API Call

- Treat the External Service as a Source System

- Handling JSON Responses: Two Practical Options

- Integrating the External Script: Dependencies and Interface Marts

- Combining Two Sources in the Business Vault

- A Pattern Worth Generalizing

Modeling External Business Logic: The Full Flow

The scenario starts simply enough. You have CRM data — let’s say customer records with addresses — that gets staged and broken down into the Raw Data Vault in the usual way: Hubs for business concepts, Satellites for descriptive attributes. The raw address from the CRM system lands in a Satellite.

Now comes the complication. You need to cleanse and standardize those addresses using an external REST API. A Python script handles the call: it pulls data from the platform, formats it into the required input — perhaps a single string or a calculated key — and sends it to the external service. The service returns a JSON response with the standardized address and additional metadata.

This flow touches several layers of the Data Vault architecture, and each layer has a distinct role.

The Business Vault Prepares the API Call

Before the external call can be made, the Business Vault does preparatory work. If the REST API requires the address in a specific format or needs a calculated key, that computation belongs in the Business Vault — it’s business logic, applied to raw data, to produce the input for an external process.

The external Python script then queries this prepared data — either directly from the Business Vault or via an Interface Mart (more on that below) — and performs the REST call. The script itself may be under version control and within your organization’s control. The external service is not.
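To make the preparation step concrete, here is a minimal sketch of what "a single string or a calculated key" could look like. The function name, the column names, the semicolon delimiter, and the choice of MD5 are all assumptions for illustration. In a real platform this logic would more likely live in a Business Vault SQL view; Python is used here only to keep the examples in one language.

```python
import hashlib


def build_request_input(street, city, postal_code, country):
    """Format a raw address into one string and derive a lookup key.

    Hypothetical helper: field names, delimiter, and hash function are
    illustrative assumptions, not a prescribed standard.
    """
    parts = [street, postal_code, city, country]
    # Trim, collapse repeated whitespace, and upper-case each part.
    # Missing parts become empty strings so the delimiter count stays fixed.
    normalized = [" ".join((p or "").split()).upper() for p in parts]
    address_line = ";".join(normalized)
    # The key is deterministic: the same cleaned input always gives the same key.
    request_key = hashlib.md5(address_line.encode("utf-8")).hexdigest()
    return {"address_line": address_line, "request_key": request_key}
```

Because the key is derived from the normalized string, two CRM records that differ only in spacing or letter case produce the same key, which is what makes it usable as a join key later on.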

Treat the External Service as a Source System

This is the key modeling decision: because the external API is outside your control, you treat its responses exactly as you would treat any other source system. You don’t trust it implicitly. You stage its output and break it into the Raw Data Vault.

If your Raw Data Vault already has an Address Hub from the CRM dataset, and the external service returns identifiers that qualify as business keys — unique, stable identifiers for addresses — those can be added to the Address Hub. The JSON response from the API then gets captured in a Satellite in the Raw Data Vault, associated with the appropriate Hub.

This approach gives you a clean audit trail. You know exactly what the external service returned, when it returned it, and what key was used to make the call. If the external service changes its response structure or returns unexpected data, your Raw Data Vault captures that reality as-is, and your downstream Business Vault logic handles interpretation.
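As a rough sketch of that audit trail, the script could wrap each response in the metadata a staging row would normally carry before anything is parsed. The function name, the column names, and the `ADDRESS_API` record source value are assumptions made for this example.

```python
from datetime import datetime, timezone


def stage_api_response(request_key, response_body,
                       record_source="ADDRESS_API", load_date=None):
    """Wrap a raw API response body in staging metadata.

    Hypothetical helper: column names and the default record source
    are illustrative assumptions.
    """
    load_date = load_date or datetime.now(timezone.utc)
    return {
        "request_key": request_key,          # key used to make the call
        "load_date": load_date.isoformat(),  # when the response was received
        "record_source": record_source,      # identifies the external service
        "payload": response_body,            # stored as returned, not parsed
    }
```

The payload is stored as a string on purpose: if the service changes its response structure, the staging row still records exactly what came back.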

Handling JSON Responses: Two Practical Options

API responses typically come back as JSON — sometimes well-structured, sometimes semi-structured with varying schemas between messages. There are two main approaches for capturing this in the Raw Data Vault, and the right choice depends on how structured the response is and how many attributes you actually need.

Option 1 — Extract what you need, keep the rest as JSON. If the JSON is relatively consistent and you only need a subset of its attributes — say, five out of fifty — extract those five into relational columns in the Satellite. Keep the full JSON (or the remaining payload) as a JSON or JSONB attribute in the same Satellite. You get fast, typed access to the attributes you use regularly, and the full document is available for future needs without requiring a reload.

Option 2 — Keep everything in JSON, extract in the Business Vault. If you’re unsure which attributes you’ll need, or if the structure varies, capture the raw JSON in the Satellite and handle extraction later in the Business Vault. Technically, extracting fields from JSON is a structural transformation — a hard rule, not a business rule — so it could sit in the Raw Data Vault. But if the extraction is straightforward and tied to specific downstream calculations, doing it in the Business Vault view is a reasonable and common practice.

In practice, the hybrid approach from Option 1 is most common: extract the attributes you know you need into relational columns, keep the JSON alongside them. When a new attribute is needed later — and it will be — you can pull it directly from the JSON in your Business Vault view using native JSON functions, without touching the Raw Data Vault or reloading any data.
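The hybrid approach can be sketched in a few lines. The attribute names (`street`, `postal_code`, `city`, `confidence`) are invented for the example, and the sketch assumes the response is a JSON object at the top level. In a database you would use the platform's native JSON functions rather than Python.

```python
import json

# Hypothetical subset of attributes that get their own relational columns.
EXTRACTED = ("street", "postal_code", "city")


def to_satellite_row(payload):
    """Extract known attributes and keep the full document next to them."""
    doc = json.loads(payload)
    # Attributes missing from the response become None instead of failing.
    row = {name: doc.get(name) for name in EXTRACTED}
    row["payload_json"] = payload  # full response kept for future needs
    return row


def late_attribute(row, name):
    """Read an attribute that was not extracted at load time."""
    return json.loads(row["payload_json"]).get(name)
```

The second function stands in for the Business Vault view: a new attribute is read from the stored document, and nothing in the Raw Data Vault has to be reloaded.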

Integrating the External Script: Dependencies and Interface Marts

When an external script queries your data platform, whether from the Raw Data Vault or the Business Vault, it creates a dependency. The entities that script relies on can’t be freely refactored without risking a broken integration. This is worth flagging explicitly in your metadata: mark those entities as part of the Operational Vault, indicating that external applications depend on them.

A cleaner long-term solution is to introduce an Interface Mart — a stable, versioned view layer that the external script queries instead of the Raw or Business Vault directly. When you refactor a Satellite or restructure a Business Vault entity, you update the Interface Mart view to maintain the same output structure. The external script sees no change. This decouples your internal model evolution from external integrations, which is especially valuable in organizations where multiple scripts and applications consume data from the platform.
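The decoupling can be demonstrated with an in-memory SQLite database. All table, view, and column names below are hypothetical, and SQLite stands in for whatever database the platform actually runs on. The point of the sketch is only that the script's query stays the same while the entity behind the view is restructured.

```python
import sqlite3

con = sqlite3.connect(":memory:")

# Initial state: one Business Vault entity and an Interface Mart view over it.
con.executescript("""
CREATE TABLE bv_address_v1 (request_key TEXT, address_line TEXT);
INSERT INTO bv_address_v1 VALUES ('k1', '12 MAIN ST;12345;SPRINGFIELD;US');
CREATE VIEW im_address_request AS
    SELECT request_key, address_line FROM bv_address_v1;
""")


def script_query(connection):
    """The query the external script runs; it only knows the Interface Mart."""
    return connection.execute(
        "SELECT request_key, address_line "
        "FROM im_address_request ORDER BY request_key"
    ).fetchall()


before = script_query(con)

# Refactoring: the internal entity is split into separate columns and renamed.
# Only the view definition changes; its output structure stays identical.
con.executescript("""
DROP VIEW im_address_request;
CREATE TABLE bv_address_v2 (
    request_key TEXT, street TEXT, postal_code TEXT, city TEXT, country TEXT
);
INSERT INTO bv_address_v2 VALUES ('k1', '12 MAIN ST', '12345', 'SPRINGFIELD', 'US');
DROP TABLE bv_address_v1;
CREATE VIEW im_address_request AS
    SELECT request_key,
           street || ';' || postal_code || ';' || city || ';' || country
               AS address_line
    FROM bv_address_v2;
""")

after = script_query(con)
```

Here `before` and `after` hold the same rows even though the table the script originally depended on no longer exists.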

Combining Two Sources in the Business Vault

At this point, you have two sources describing the same concept: the CRM system with the original, non-standardized address, and the external address standardizer with the cleansed version. Both are captured in the Raw Data Vault as separate source inputs. The Business Vault is where you bring them together.

The pre-computed key used to make the API call serves as the joining mechanism. Based on that key, you can establish a relationship, via a Link or a direct join in a Business Vault view, between the raw CRM address and the standardized version returned by the external service. The Business Vault then exposes the combined, cleansed address data to downstream consumers: reports, dashboards, or other application scripts.
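A minimal sketch of the direct-join variant follows, written as plain Python over lists of dictionaries. The column names are assumptions, and in practice this would be a join in a Business Vault view. It also assumes `api_rows` is ordered by load date, so the latest response per key wins.

```python
def combine_addresses(crm_rows, api_rows):
    """Left-join CRM addresses to standardized ones on request_key.

    Hypothetical column names; assumes api_rows is ordered by load date
    so that a later response for the same key replaces an earlier one.
    """
    standardized = {r["request_key"]: r for r in api_rows}
    combined = []
    for crm in crm_rows:
        match = standardized.get(crm["request_key"])
        combined.append({
            "customer_id": crm["customer_id"],
            "raw_address": crm["raw_address"],
            # CRM rows without a response are kept, with no cleansed value.
            "clean_address": match["clean_address"] if match else None,
            "is_cleansed": match is not None,
        })
    return combined
```

A left join is used so that a CRM address the service has not yet processed still appears downstream, flagged as not cleansed rather than silently dropped.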

The exact modeling decisions at this stage depend heavily on how the CRM data is structured and what the business actually needs from the cleansed address. But the principle holds regardless: raw inputs from both the CRM and the external API live in the Raw Data Vault; the logic that combines and interprets them lives in the Business Vault.

A Pattern Worth Generalizing

Address cleansing is one example, but the same pattern applies to any external enrichment service: geocoding APIs, credit scoring services, entity resolution services, tax calculation engines. Whenever your pipeline includes a call to an external system that returns data you need to capture and use, the approach is the same — treat the response as a source, stage it, load it into the Raw Data Vault, and apply interpretation and combination logic in the Business Vault.

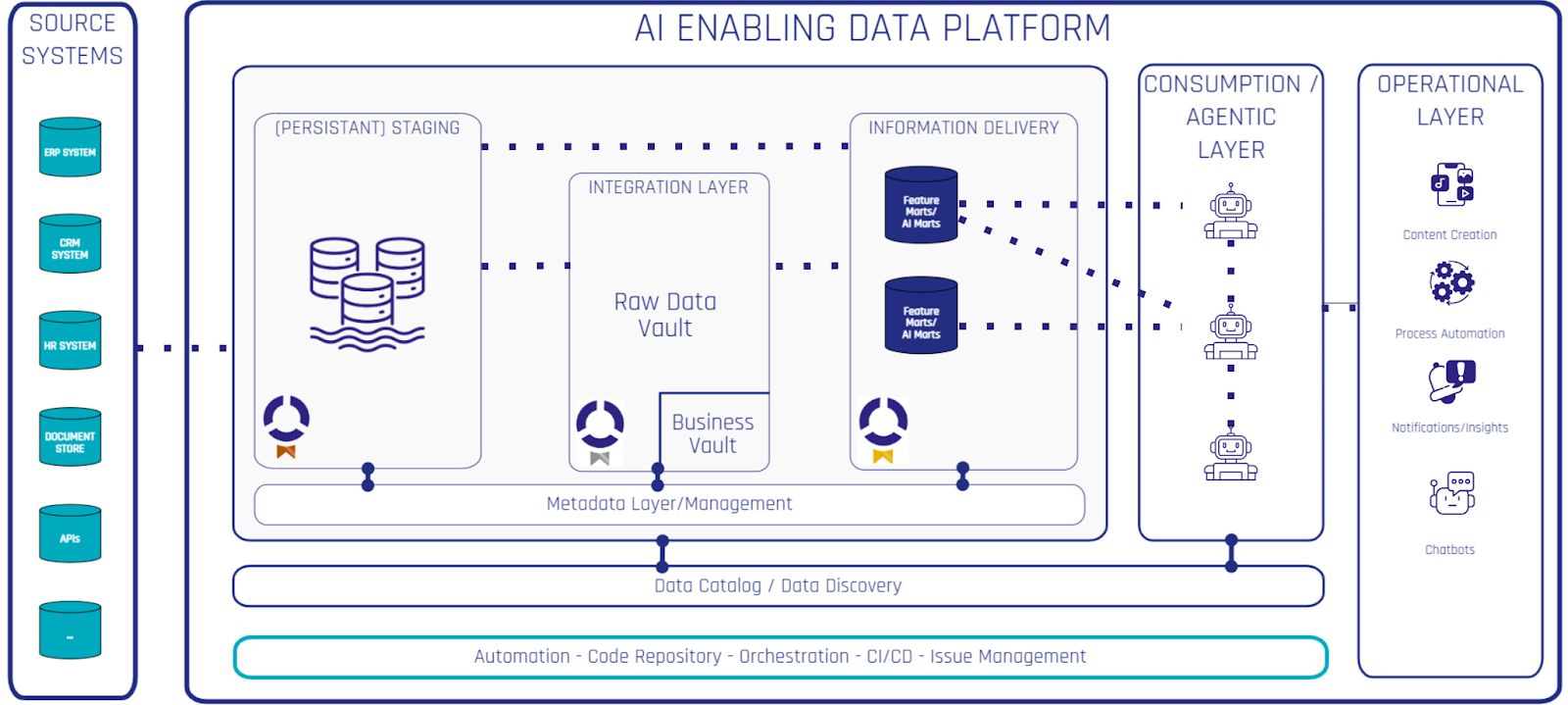

This pattern also fits data-driven organizations where information is consumed not just through reports and dashboards but through application scripts and automated processes. The enterprise data platform becomes a hub for both analytical and operational consumers, and Data Vault’s layered architecture handles both cleanly.

To explore these patterns in depth — including Business Vault design, Interface Marts, and integrating external sources — check out our Data Vault 2.1 Training & Certification. The free Data Vault handbook is also available as a physical copy or ebook for a solid introduction to the core methodology.