Data is the fuel of the digital economy. However, its true value is realized only when it is processed quickly and reliably and is structured for analysis and reporting. Real-time data streaming enables companies to make data-driven decisions instantly. Data Vault 2.0 combined with AWS Kinesis provides a future-proof solution for efficiently processing and storing large volumes of data in modern data warehousing and BI environments.

Realtime on AWS with Data Vault 2.0

Join our webinar on March 18th, 2025, 11 am CET, and learn how to build a scalable, real-time data architecture on AWS. We’ll cover AWS infrastructure for real-time data and the application of Data Vault 2.0 in real-time scenarios, and we’ll show a live demo with a real-world use case.

Why Real-Time Data Streaming for Data Warehousing and BI?

In today’s fast-paced business environment, timely access to accurate data is essential for making informed decisions. Traditional batch processing methods can no longer keep up with the need for real-time insights, often resulting in outdated reports and slow reaction times. Real-time data streaming solves this problem by enabling continuous data integration, allowing companies to analyze and act on fresh data as it arrives. This shift not only improves operational efficiency but also enhances overall business intelligence strategies by ensuring that the most up-to-date information is always available.

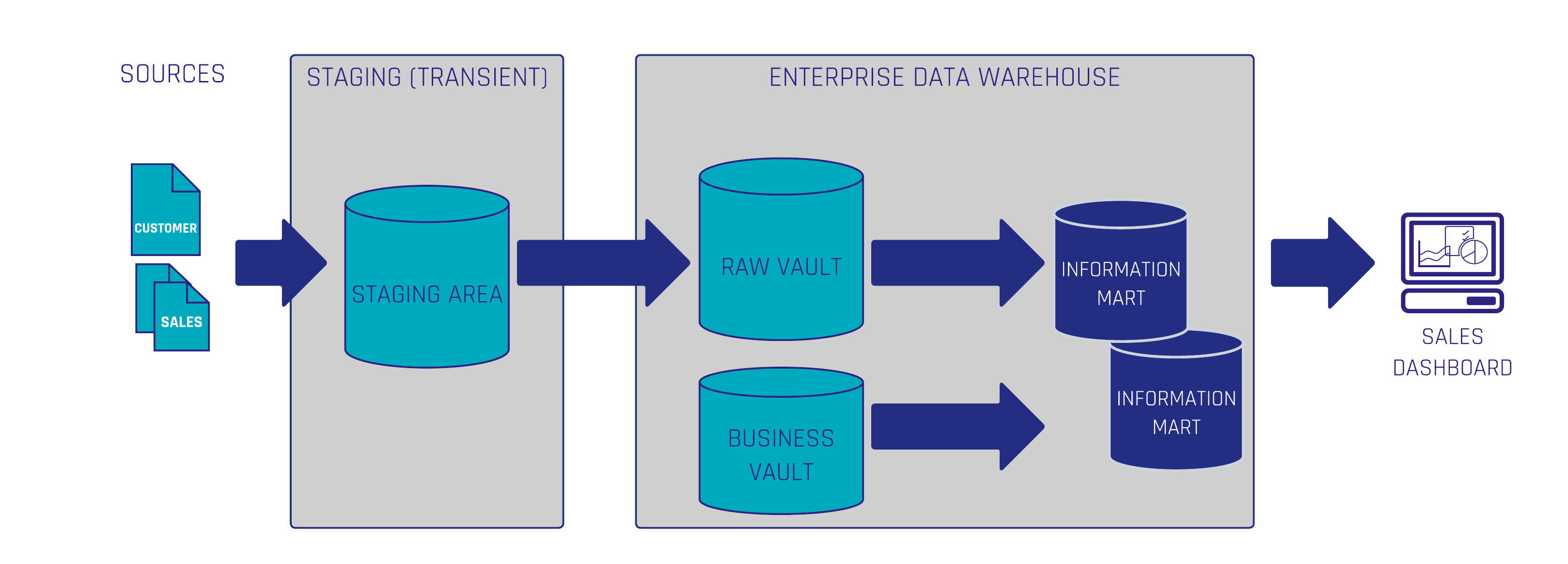

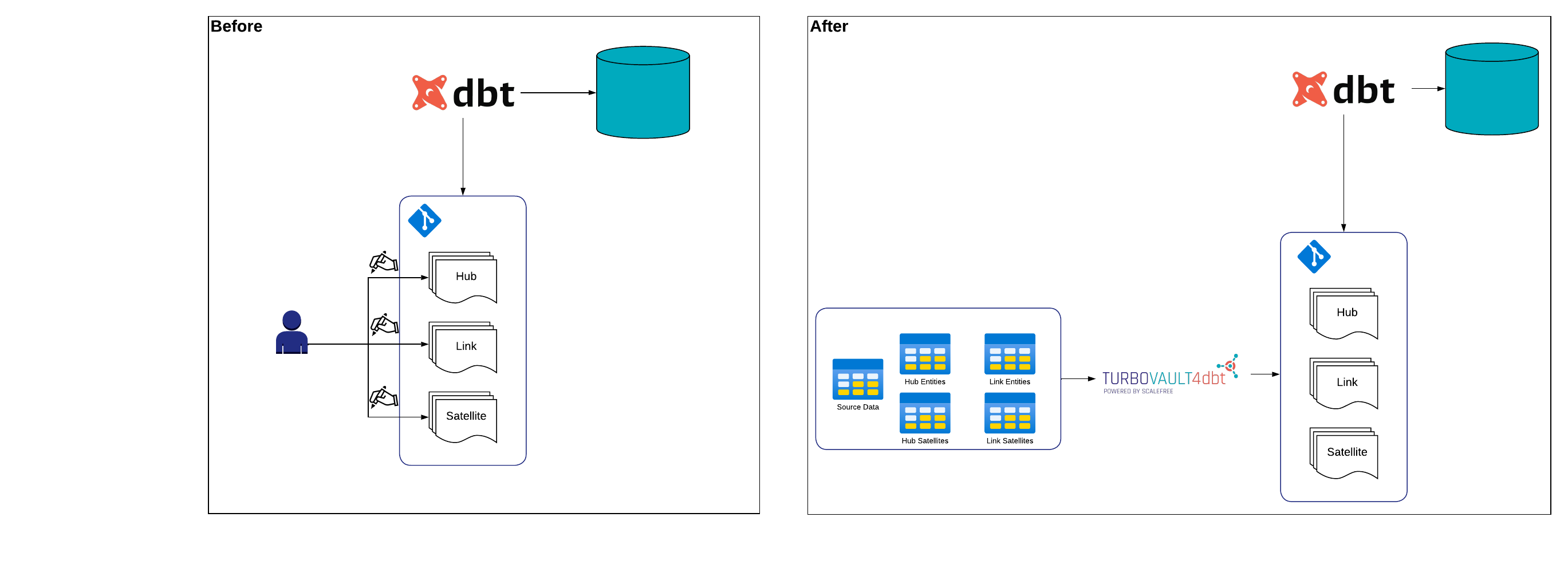

Data Vault 2.0 as the Foundation for Real-Time Data Warehousing

As organizations deal with increasing volumes of data from multiple sources, they need a flexible and scalable approach to data modeling. Data Vault 2.0 provides a strong foundation for real-time data warehousing by offering a structured yet adaptable methodology. Unlike traditional data models, which can be rigid and difficult to modify, Data Vault 2.0 adapts quickly to new requirements: new sources and attributes are added as additional hubs, links, and satellites instead of restructuring existing tables. By leveraging Data Vault 2.0, companies can build a resilient and future-proof data warehouse capable of handling real-time data streams.
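To make the modeling idea concrete, the following is a minimal sketch of how a single streaming event could be split into a hub row and a satellite row. It is an illustration under stated assumptions, not a reference implementation: the column names (`hk_customer`, `hd_customer`, `ldts`, `rsrc`), the `||` delimiter, the `customer_id` business key, and the choice of SHA-256 are all hypothetical conventions chosen for this example.

```python
import hashlib
from datetime import datetime, timezone
from typing import Dict, Tuple

DELIMITER = "||"  # assumed separator between concatenated values


def hash_key(*business_keys: str) -> str:
    """Hash the normalized (trimmed, upper-cased) business key parts."""
    normalized = DELIMITER.join(str(k).strip().upper() for k in business_keys)
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def hash_diff(attributes: Dict[str, object]) -> str:
    """Hash descriptive attributes in a fixed (sorted) column order,
    so the same values always yield the same change-detection hash."""
    ordered = DELIMITER.join(str(attributes[name]).strip() for name in sorted(attributes))
    return hashlib.sha256(ordered.encode("utf-8")).hexdigest()


def to_vault_rows(event: Dict[str, object], record_source: str) -> Tuple[dict, dict]:
    """Split one event into a hub row and a satellite row.

    Assumes the event carries its business key in a 'customer_id' field
    (a hypothetical name); every other field is treated as descriptive.
    """
    load_ts = datetime.now(timezone.utc).isoformat()
    hk = hash_key(str(event["customer_id"]))
    descriptive = {k: v for k, v in event.items() if k != "customer_id"}

    hub = {
        "hk_customer": hk,
        "customer_id": event["customer_id"],
        "ldts": load_ts,
        "rsrc": record_source,
    }
    satellite = {
        "hk_customer": hk,
        "hd_customer": hash_diff(descriptive),
        "ldts": load_ts,
        "rsrc": record_source,
        **descriptive,
    }
    return hub, satellite
```

Because the hash key is derived only from the business key, each event can be processed independently as it arrives, without a lookup against the target tables. A new descriptive field in the event simply appears as a new satellite attribute, and the hub row stays unchanged.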

AWS Kinesis: Real-Time Data for Your Data Warehouse

Processing real-time data at scale requires a robust infrastructure, and AWS Kinesis is built precisely for this purpose. It enables businesses to collect, process, and analyze real-time data streams, so that data warehouses remain continuously updated. By reducing data latency, companies can generate insights in near real time, leading to faster decision-making and improved operational performance. Furthermore, AWS Kinesis integrates with widely used data warehouse platforms such as Amazon Redshift and Snowflake, making it an essential component for modern data architectures. Its dynamic scaling capabilities provide cost efficiency by adjusting resource consumption based on actual demand. Additionally, Kinesis includes security features such as encryption and IAM-based access control, which help protect sensitive data and support compliance with industry regulations.
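As a rough illustration of the collection step, the sketch below prepares events for a Kinesis data stream and sends them in batches with the boto3 `put_records` call. The stream name, the `customer_id` partition key field, and the helper names are assumptions made for this example. The batch size of 500 reflects the per-request record limit of `PutRecords` at the time of writing. A production producer would also retry failed records, which is omitted here.

```python
import json
from typing import Dict, Iterable, List

MAX_RECORDS_PER_CALL = 500  # PutRecords accepts at most 500 records per request


def build_entries(events: Iterable[Dict], key_field: str) -> List[Dict]:
    """Turn events into PutRecords entries: JSON bytes plus a partition key.

    Records with the same partition key land on the same shard,
    which preserves their relative order.
    """
    return [
        {
            "Data": json.dumps(event, sort_keys=True).encode("utf-8"),
            "PartitionKey": str(event[key_field]),
        }
        for event in events
    ]


def chunk(entries: List[Dict], size: int = MAX_RECORDS_PER_CALL) -> List[List[Dict]]:
    """Split entries into batches that respect the per-request limit."""
    return [entries[i:i + size] for i in range(0, len(entries), size)]


def send(events: Iterable[Dict], stream_name: str, key_field: str = "customer_id") -> int:
    """Send events to the stream and return the number of failed records.

    Requires boto3 and AWS credentials; imported lazily so the helpers
    above can be used and tested without them.
    """
    import boto3

    client = boto3.client("kinesis")
    failed = 0
    for batch in chunk(build_entries(events, key_field)):
        response = client.put_records(StreamName=stream_name, Records=batch)
        failed += response["FailedRecordCount"]
    return failed
```

A call such as `send(events, "customer-events")` (with a hypothetical stream name) would push the events into the stream, from where a consumer can load them into the data warehouse.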

Conclusion: Future-Proof BI and Data Warehousing with Real-Time Streaming

Companies that embrace real-time data processing benefit from faster BI analysis, lower costs, and greater scalability. Data Vault 2.0 combined with AWS Kinesis offers a powerful, future-proof solution for modern data warehousing architectures. By enabling seamless integration of real-time data, businesses can react instantly to market changes, optimize their operations, and stay ahead of the competition.

Investing in real-time data streaming is not just about speed; it’s about building a resilient and adaptive data infrastructure that grows with your business. Organizations that adopt these technologies today can gain a significant competitive edge in an increasingly data-driven world. Leverage real-time streaming for BI and maximize the value of your data!