Watch the Video

PIT and Bridge Tables

In this blog post, we will answer a commonly asked question regarding PIT and Bridge Tables:

In the Data Vault architecture, is it okay to use/reuse created PIT and Bridge tables in the code of the Business Vault business rules?

The short answer is yes, but let’s dive into the details to understand the rationale and how PIT (Point-In-Time) tables and Bridge tables work in the context of Business Vault entities.

In this article:

Understanding PIT and Bridge Tables

Before explaining their usage, let’s quickly clarify what PIT and Bridge tables are in the Data Vault architecture:

- PIT Tables: These provide a snapshot of data for a specific point in time. They help combine deltas and descriptive data to enable calculations or business logic that requires a specific snapshot.

- Bridge Tables: These are primarily used to resolve many-to-many relationships and improve query performance when dealing with large datasets.

Applying Business Logic in Business Vault

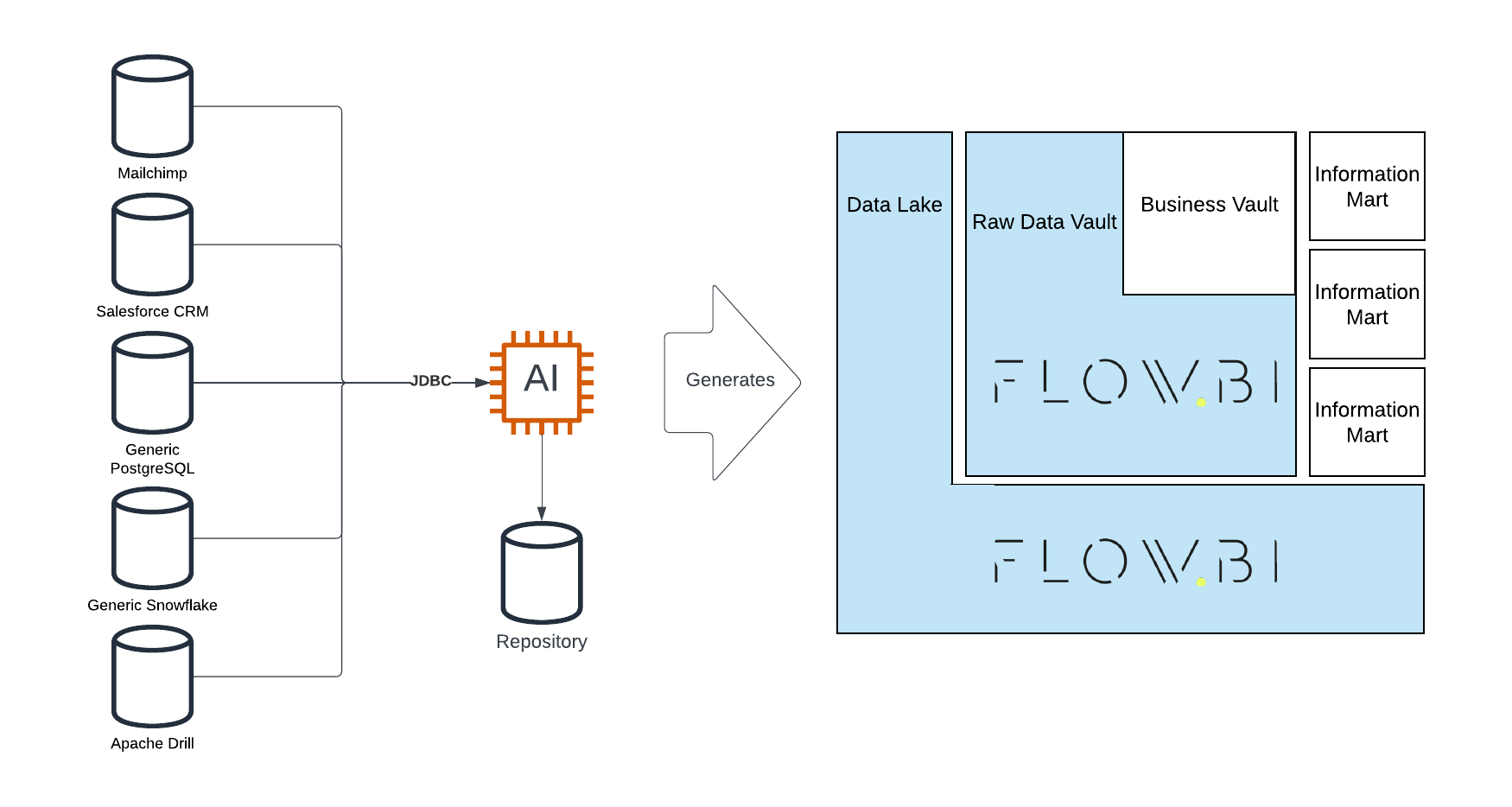

In the Data Vault, data flows from the Raw Data Vault (RDV) to the Business Vault (BV) and finally to the Information Mart (IM). The key difference lies in the granularity of data:

- Load Date: In the Raw Data Vault, data batches are identified by a load date, which represents when the data was ingested.

- Snapshot Date: In the Information Mart, data is often presented as snapshots, where each snapshot represents the data at a specific point in time.

Now, the Business Vault sits between the Raw Data Vault and Information Marts. When applying business rules in the BV, there are two major types of granularities to consider:

1. Granularity Based on Incoming Deltas

In this case, business logic is applied to all incoming deltas identified by the load date. For example, cleansing phone numbers is a typical use case where every delta (update) must be processed, even if only the latest version is needed in the end.

The resulting data is stored in a computed Satellite in the Business Vault. The primary key remains the hash key of the parent entity and the load date.

2. Granularity Based on Snapshot Date

Some business logic requires calculations for specific points in time. For example, calculating the lifetime value of a customer:

- The lifetime value increases when a customer makes a purchase.

- The lifetime value decreases incrementally if no purchases are made over time.

In this scenario, even when no new delta is coming in, the value must still be recalculated daily. This granularity aligns with the snapshot date, which is already defined in the PIT table. By leveraging the PIT table, you can calculate and store the lifetime value in a computed Satellite with a primary key of the parent hash key and snapshot date.

Reusing PIT Tables

When switching from load date (deltas) to snapshot date (snapshots), PIT tables play a crucial role:

- PIT tables help join descriptive data from Satellites to provide a snapshot-based view of the data.

- They allow business rules to be applied to outgoing information granularity (snapshot date).

For example, if you want to calculate a specific measure, such as a customer’s lifetime value, the PIT table provides the granularity needed to compute the values for every day, hour, or minute, depending on your requirements.

Reusing Bridge Tables

Bridge tables can also be reused in Business Vault entities but with one key consideration:

Avoid loading one Bridge Table from another Bridge Table.

Why? Cascading Bridge Tables can lead to sequential dependencies, which hinder parallelization. Parallel processing is essential for performance, especially in high-volume environments. To work around this limitation, use Computed Aggregate Links.

What Is a Computed Aggregate Link?

A Computed Aggregate Link is essentially a Link with pre-computed aggregations. This concept is described in the Data Vault methodology and allows you to reuse aggregations efficiently without chaining Bridge Tables together.

For example, if you want to calculate a new measure based on facts stored in a Bridge Table:

- Use the Bridge Table as the

FROMsource for a computed Satellite. - Attach the new measure to the Bridge Table as part of the Business Vault entity.

This approach avoids cascading dependencies while allowing you to extend facts or perform complex calculations.

Best Practices Recap for PIT and Bridge Tables

Here are the key takeaways for using PIT and Bridge tables in Business Vault entities:

- Yes, you can reuse PIT tables: They are commonly used to provide snapshot granularity for computed Satellites.

- Yes, you can reuse Bridge tables: Use them carefully to avoid cascading dependencies.

- Use Computed Aggregate Links: When you need to extend a Bridge Table, this is the recommended approach to maintain efficiency and parallelization.

- Granularity switch: Be mindful of the transition from load date (delta-driven) to snapshot date (snapshot-driven) when applying business logic.

Summary

In summary, PIT and Bridge tables are powerful tools in the Data Vault architecture, especially within the Business Vault. They enable complex business logic, such as snapshot-based calculations, while maintaining efficiency and performance. By adhering to best practices like avoiding cascading Bridge Table loads, you can ensure your implementation remains scalable and robust.

Meet the Speaker

Michael Olschimke

Michael has more than 15 years of experience in Information Technology. During the last eight years he has specialized in Business Intelligence topics such as OLAP, Dimensional Modelling, and Data Mining. Challenge him with your questions!