Exploring Data Vault 2.0: Managing Hashing Costs in Smaller Environments

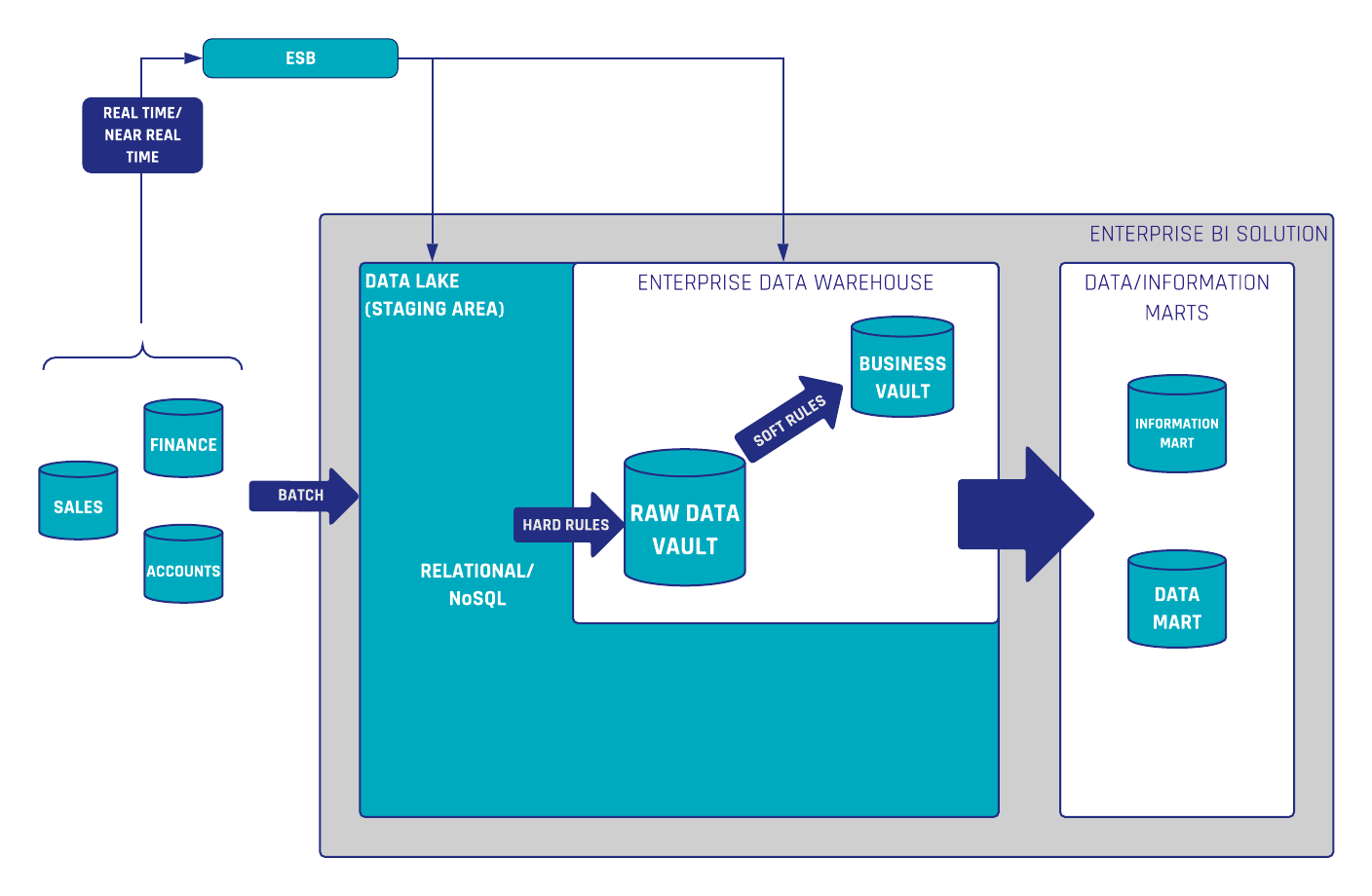

In the evolving landscape of data management, Data Vault 2.0 stands out as a robust methodology designed for scalability, flexibility, and consistency across diverse technological environments. A crucial component of Data Vault 2.0 is the use of hashing for business keys (BKs) and hash diffs. Hashing ensures data integrity and efficiency, especially in distributed systems. However, the performance costs associated with hashing can sometimes become a significant concern. This blog post delves into the nuances of hashing in Data Vault 2.0, the trade-offs involved, and when it might be feasible to deviate from the standard approach.

The Role of Hashing in Data Vault 2.0

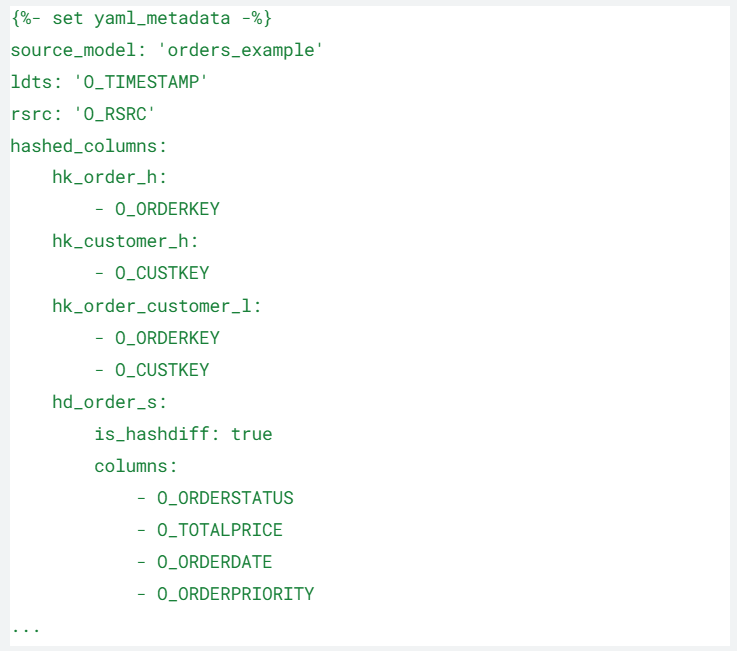

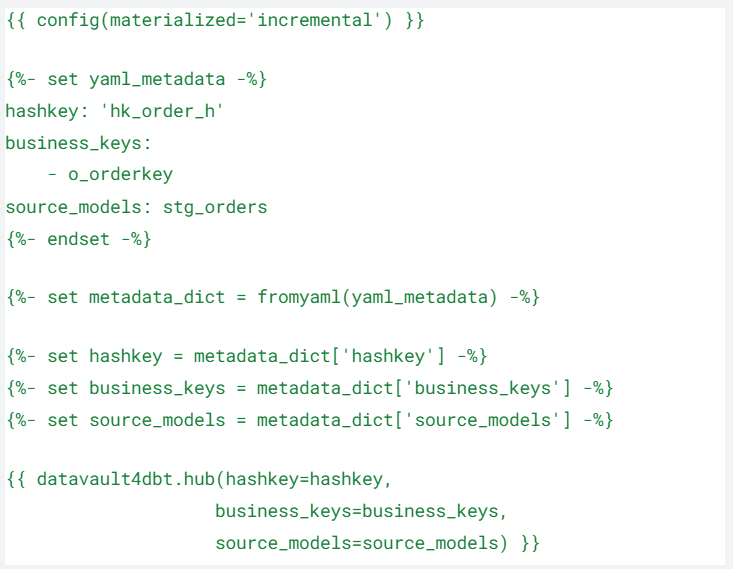

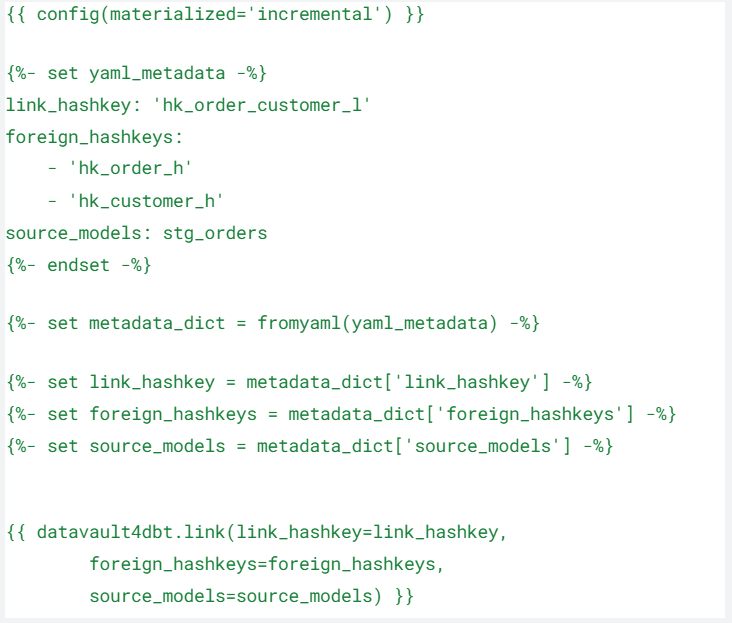

Data Vault 2.0 uses hashing to create consistent, fixed-length identifiers for business keys and to detect changes in data efficiently. The method is technology-agnostic, meaning it can be implemented across various databases and data platforms, whether on-premises or in the cloud. The primary advantages of hashing include:

- Consistency Across Systems: Hashing ensures that business keys are consistent and unique across different systems and regions.

- Improved Query Performance: Pre-calculating hash diffs moves the computational load from query time to data loading time, so a change check compares a single stored value instead of every descriptive column.

- Simplified Data Integration: Hash keys provide a straightforward way to manage and integrate data from multiple sources, reducing the complexity of data joins.
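
As a minimal sketch of the idea, the following Python computes a hash key from a business key and a hash diff from a set of descriptive attributes. The function names, the `;` delimiter, the trim and upper-case normalization, and the choice of MD5 are assumptions made for illustration; the article does not prescribe them.

```python
import hashlib

DELIMITER = ";"  # assumed separator between concatenated values


def normalize(value):
    """Render a value as text; None becomes an empty string (assumed convention)."""
    return "" if value is None else str(value).strip()


def hash_key(*business_key_parts):
    """Hash one or more business key parts into a fixed-length hex string."""
    text = DELIMITER.join(normalize(p).upper() for p in business_key_parts)
    return hashlib.md5(text.encode("utf-8")).hexdigest()


def hash_diff(attributes):
    """Hash descriptive attributes in a fixed (sorted) column order."""
    text = DELIMITER.join(normalize(attributes[name]) for name in sorted(attributes))
    return hashlib.md5(text.encode("utf-8")).hexdigest()


# The same business key yields the same hash key regardless of padding or case.
customer_hk = hash_key(" c-1001 ")
same_customer_hk = hash_key("C-1001")

# A changed attribute yields a different hash diff.
old_diff = hash_diff({"name": "Alice", "city": "Berlin"})
new_diff = hash_diff({"name": "Alice", "city": "Munich"})
```

Because the key is derived only from the business key itself, any system that applies the same normalization rules arrives at the same identifier without coordinating with the others.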

Challenges of Hashing

Despite its benefits, hashing can introduce performance challenges, particularly in the following scenarios:

- Wide Tables: Calculating hash diffs for tables with a large number of columns can be computationally intensive.

- Complex Hash Functions: Ensuring that the hash input is built so that distinct rows yield distinct values (for example, through delimiters and consistent null handling) can be complex and resource-heavy.

- Hardware Limitations: On-premises environments with limited hardware capabilities might struggle with the additional computational load required for hashing.
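
To illustrate why preparing the hash input takes care, the sketch below compares concatenating column values with and without a delimiter. The helper names, the `;` delimiter, and the sample rows are hypothetical.

```python
import hashlib


def md5_hex(text):
    """Return the MD5 digest of a string as hex."""
    return hashlib.md5(text.encode("utf-8")).hexdigest()


def naive_input(values):
    """Concatenate without a delimiter: column boundaries are lost."""
    return "".join("" if v is None else str(v) for v in values)


def delimited_input(values, delimiter=";"):
    """Concatenate with a delimiter so column boundaries survive."""
    return delimiter.join("" if v is None else str(v) for v in values)


# Three different rows that all collapse to "ABC" without a delimiter.
rows = [
    ["AB", "C", None],
    ["A", "BC", None],
    ["AB", None, "C"],
]

naive_hashes = {md5_hex(naive_input(r)) for r in rows}
delimited_hashes = {md5_hex(delimited_input(r)) for r in rows}
```

Here `naive_hashes` contains a single value while `delimited_hashes` contains three. A delimiter alone is still not a complete answer: values that themselves contain the delimiter would need escaping or a different separator, which is part of what makes the preparation step costly on wide tables.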

Evaluating Hashing Alternatives

When faced with performance concerns, particularly in smaller, local solutions, it’s essential to consider whether deviating from the standard hashing approach would be beneficial. There are three primary options to consider:

- Hash Keys: The default and recommended option for most environments, especially those involving distributed systems or diverse technologies.

- Sequences: A legacy approach from Data Vault 1.0 that uses sequential numbers as identifiers.

- Business Keys: Using the original business keys directly as identifiers.

The Case Against Sequences

Sequences, although a viable option, are generally not recommended in modern Data Vault implementations due to several drawbacks:

- Lookup Overhead: Sequences require lookups during data loading, which can slow down the process significantly.

- Orchestration Complexity: Managing sequences adds complexity to the loading process, particularly in real-time scenarios.

- Distributed System Challenges: Sequences do not perform well in distributed environments where parts of the solution might reside in different locations (e.g., cloud and on-premises).
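
The lookup dependency can be sketched as follows. `SequenceHub` is a hypothetical in-memory stand-in for a hub table keyed by a sequence, and the MD5-based `hash_key` is an assumed implementation; neither comes from a specific product.

```python
import hashlib
from itertools import count


def hash_key(business_key):
    """Derive the key from the business key alone (assumed normalization)."""
    return hashlib.md5(business_key.strip().upper().encode("utf-8")).hexdigest()


class SequenceHub:
    """Hypothetical hub keyed by a sequence; stands in for a database table."""

    def __init__(self):
        self._next_id = count(1)
        self._ids = {}

    def load(self, business_key):
        # Assign the next sequence number to an unseen business key.
        if business_key not in self._ids:
            self._ids[business_key] = next(self._next_id)

    def lookup(self, business_key):
        # Raises KeyError if the hub has not been loaded yet.
        return self._ids[business_key]


def link_row_with_sequences(customer_hub, product_hub, customer_bk, product_bk):
    """Needs both hubs loaded first, then one lookup per referenced hub."""
    return (customer_hub.lookup(customer_bk), product_hub.lookup(product_bk))


def link_row_with_hashes(customer_bk, product_bk):
    """Needs no hub access: keys are computed from the business keys."""
    return (hash_key(customer_bk), hash_key(product_bk))
```

With sequences, the link load has to wait for both hub loads and then query them, which is the orchestration and lookup cost described above. With hash keys, hubs, links, and satellites can in principle be loaded independently, even when they live on different platforms.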

Hash Keys vs. Business Keys

When deciding between hash keys and business keys, the choice largely depends on the specific technology stack and the environment. Here are some considerations:

Hash Keys

- Pros: Provide a consistent, fixed-length identifier that simplifies joins and queries across various systems. They are particularly beneficial in mixed environments.

- Cons: Slightly higher computational cost during data loading compared to sequences. However, the consistent performance across queries often outweighs this drawback.

Business Keys

- Pros: Directly using business keys can simplify the architecture in environments where the data platform supports efficient handling of these keys.

- Cons: Can lead to complex and less efficient joins, especially in mixed or distributed environments.
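
The difference in join shape can be shown with a small sketch. The sample data, the composite key of `country` and `customer_no`, and the MD5-based `hash_key` helper are all hypothetical.

```python
import hashlib


def hash_key(*parts):
    """Hash a (possibly composite) business key into one fixed-length value."""
    text = ";".join(str(p).strip().upper() for p in parts)
    return hashlib.md5(text.encode("utf-8")).hexdigest()


customers = [
    {"country": "DE", "customer_no": "1001", "name": "Alice"},
    {"country": "US", "customer_no": "1001", "name": "Bob"},
]
orders = [
    {"country": "DE", "customer_no": "1001", "amount": 250},
    {"country": "US", "customer_no": "1001", "amount": 90},
]

# Join on the business key: every join has to repeat all key columns.
by_bk = {(c["country"], c["customer_no"]): c["name"] for c in customers}
joined_bk = [(by_bk[(o["country"], o["customer_no"])], o["amount"]) for o in orders]

# Join on the hash key: computed once at load time, then a single column.
for row in customers + orders:
    row["customer_hk"] = hash_key(row["country"], row["customer_no"])
by_hk = {c["customer_hk"]: c["name"] for c in customers}
joined_hk = [(by_hk[o["customer_hk"]], o["amount"]) for o in orders]
```

Both joins return the same rows. The business key variant avoids the hashing step but carries every key column, with its source-specific data types and lengths, into each join; the hash key variant pays for the hash at load time and joins on one column of constant width.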

Performance Optimization Strategies for Hashing

For environments where hashing performance is a concern, several optimization strategies can be employed:

- Leverage Hardware Acceleration: On-premises environments can benefit from hardware acceleration, such as PCIe cards with crypto chips, to offload hash computation from the CPU.

- Utilize Optimized Libraries: Many platforms use highly optimized libraries (e.g., OpenSSL) for hash computations, which can significantly improve performance.

- Incremental Loads: Ensure that performance evaluations consider multiple load cycles to capture the benefits of hash diffs during delta checks, not just initial loads.
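
The point about incremental loads can be sketched as a delta check over two load cycles. The dictionary `satellite` is a hypothetical stand-in for the latest hash diff stored per key, and the column names and sample rows are invented for illustration.

```python
import hashlib


def hash_diff(row, columns):
    """Hash the descriptive columns of a row in a fixed order."""
    text = ";".join("" if row[c] is None else str(row[c]) for c in columns)
    return hashlib.md5(text.encode("utf-8")).hexdigest()


def changed_keys(staged_rows, current_hash_diffs, key_column, columns):
    """Return keys whose staged hash diff differs from the stored one."""
    changed = []
    for row in staged_rows:
        new_diff = hash_diff(row, columns)
        # One comparison per row, independent of how many columns it has.
        if current_hash_diffs.get(row[key_column]) != new_diff:
            changed.append(row[key_column])
            current_hash_diffs[row[key_column]] = new_diff
    return changed


COLUMNS = ["name", "city", "segment"]
satellite = {}  # key -> latest hash diff; stands in for the satellite table

initial_load = [
    {"id": "C1", "name": "Alice", "city": "Berlin", "segment": "A"},
    {"id": "C2", "name": "Bob", "city": "Hamburg", "segment": "B"},
]
second_load = [
    {"id": "C1", "name": "Alice", "city": "Berlin", "segment": "A"},
    {"id": "C2", "name": "Bob", "city": "Munich", "segment": "B"},
]

first_changes = changed_keys(initial_load, satellite, "id", COLUMNS)
second_changes = changed_keys(second_load, satellite, "id", COLUMNS)
```

On the initial load every row counts as changed, so the hash diff only adds cost. On the second load only `C2` is detected, and the comparison against the target is a single value per row. A benchmark limited to the first load would therefore see the cost of hashing without its benefit.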

Future Trends and Recommendations

Looking forward, the evolution of data platforms and technologies might shift the balance towards using business keys more frequently. As Massively Parallel Processing (MPP) databases become more prevalent, their native support for efficient key management could make business keys a more attractive option. However, until such technologies are ubiquitous, the default recommendation remains to use hash keys for their broad compatibility and consistent performance.

Conclusion

Data Vault 2.0’s approach to hashing business keys and hash diffs provides significant advantages in terms of consistency, scalability, and performance. While the performance costs of hashing can be a concern, particularly in smaller environments with limited hardware, careful consideration of the available options and optimization strategies can mitigate these issues. Ultimately, the decision should be guided by the specific technological context and future-proofing considerations.

For most scenarios, hash keys remain the recommended approach due to their versatility and robustness in mixed and distributed environments. However, as technology evolves, the use of business keys might become more feasible, highlighting the importance of staying informed about the latest trends and advancements in data management.

Meet the Speaker

Michael Olschimke

Michael has more than 15 years of experience in Information Technology. During the last eight years, he has specialized in Business Intelligence topics such as OLAP, Dimensional Modelling, and Data Mining. Challenge him with your questions!