Choosing the right technology stack is a critical decision when building an open source powered Enterprise Data Warehouse (EDW). The stack consists of several components, including databases, automation tools, DevOps tooling, infrastructure, and visualization tools, which work together to enable efficient data management, processing, and analysis.

In this blog article, we will dive deeper into the topic of selecting the right tech stack for an open source powered EDW. We will explore different aspects to consider, such as evaluating vendors, leveraging open source products, and understanding the key components of a robust tech stack. By the end of this article, you will have a better understanding of the factors to consider when selecting the right tech stack for your EDW.

Choosing the right Tech Stack for an Open Source powered EDW

Join our webinar as our expert dives into the process of selecting the tech stack for your open-source Enterprise Data Warehouse (EDW) project. Learn more about essential considerations such as evaluating vendors, leveraging open-source products, and understanding key components like databases, automation tools, DevOps, infrastructure, and visualization. Furthermore, discover the power of combining Data Vault 2.0 with an open-source tech stack and learn how it can empower your EDW project.

Evaluating Vendors and Leveraging Open-Source Products:

When embarking on the journey of building an open-source powered EDW, it is crucial to evaluate vendors and leverage open source products effectively. By choosing reputable vendors and open source solutions, you can ensure reliability, community support, and continuous development. Evaluating vendors involves assessing their expertise, reputation, and compatibility with your project requirements. Additionally, leveraging open source products provides flexibility, cost-effectiveness, and access to a vast community of contributors and developers.

Understanding the Key Components of a Robust Tech Stack:

A robust tech stack for an open source powered EDW comprises various components that work together to enable efficient data management and analysis. Here are some key components to consider:

Databases:

Choosing the appropriate database technology is vital for efficient data storage and retrieval. Consider options such as MongoDB, PostgreSQL, MySQL, or other databases that align with your project requirements.

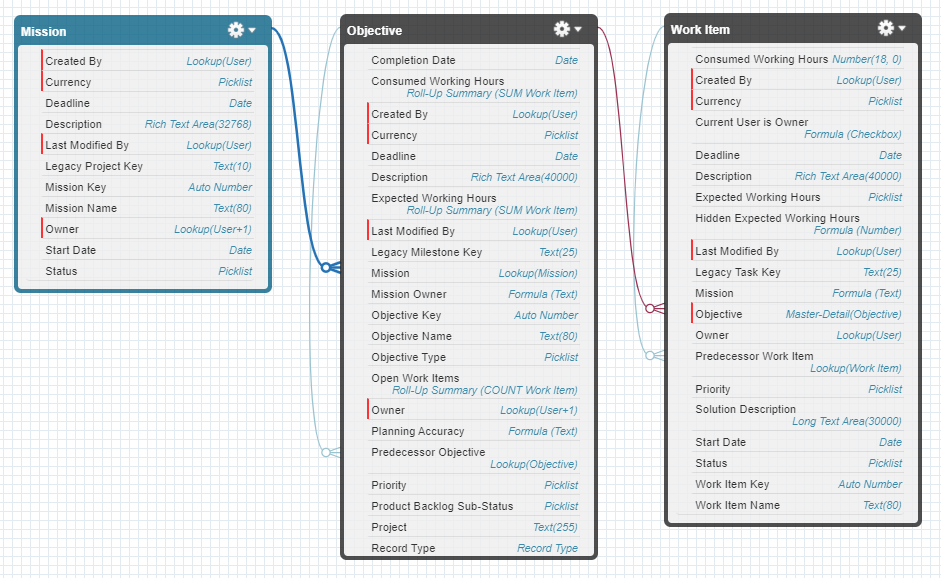

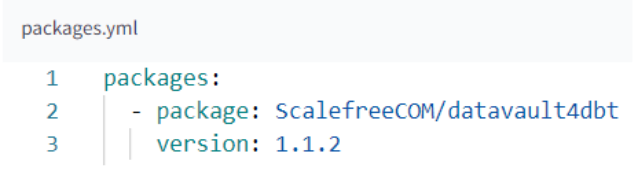

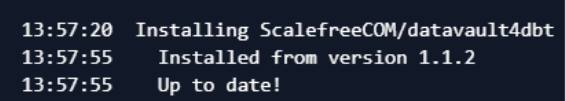

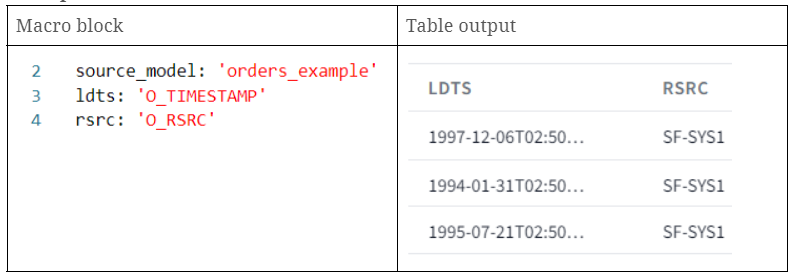

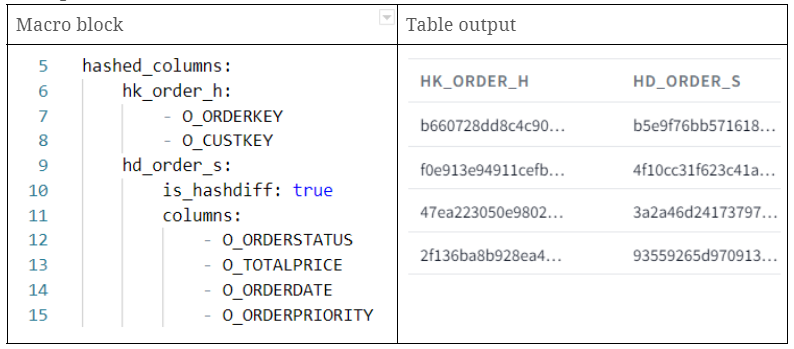

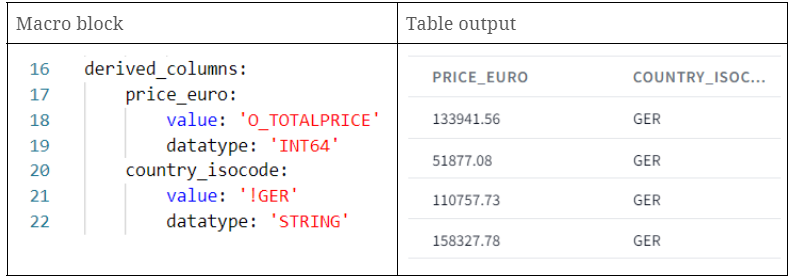

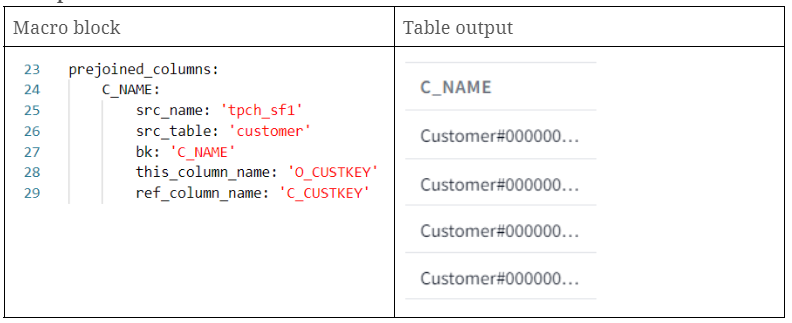

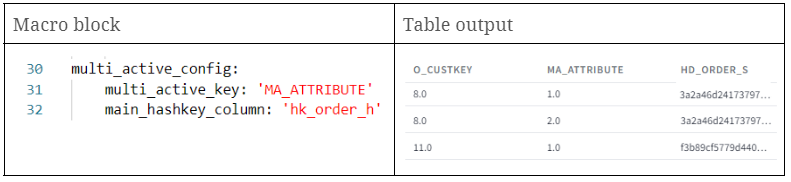

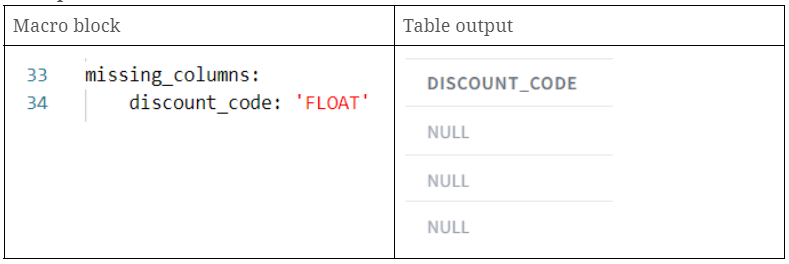

Automation Tools:

Automation tools play a crucial role in building an EDW. They greatly accelerate development, particularly in a Data Vault project. One example of an open source automation tool is dbt (data build tool), which can be combined with Scalefree’s self-developed package DataVault4dbt. Together, these tools streamline development and make the team more efficient.
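To make the idea of automation more concrete, here is a minimal sketch of what such tools do conceptually: they turn a small piece of metadata into the repetitive loading SQL you would otherwise write by hand. This is not how dbt or DataVault4dbt work internally and it does not use their APIs. The table and column names, the `render_hub_load` helper, and the PostgreSQL-style SQL are all assumptions made for illustration.

```python
from string import Template

# Hypothetical template for loading a Data Vault hub.
# Assumes PostgreSQL-style SQL; adjust the functions for other databases.
HUB_LOAD_TEMPLATE = Template("""\
INSERT INTO $hub ($hash_key, $business_key, load_date, record_source)
SELECT DISTINCT
    MD5(UPPER(TRIM(CAST(src.$business_key AS TEXT)))) AS $hash_key,
    src.$business_key,
    CURRENT_TIMESTAMP AS load_date,
    '$record_source' AS record_source
FROM $source AS src
WHERE src.$business_key IS NOT NULL
  AND NOT EXISTS (
      SELECT 1 FROM $hub AS hub
      WHERE hub.$business_key = src.$business_key
  );
""")

# Hypothetical metadata: one entry per hub to be loaded.
HUBS = [
    {"hub": "hub_customer", "hash_key": "hk_customer",
     "business_key": "customer_number",
     "source": "stage.crm_customers", "record_source": "crm"},
    {"hub": "hub_product", "hash_key": "hk_product",
     "business_key": "product_code",
     "source": "stage.shop_products", "record_source": "shop"},
]


def render_hub_load(hub, hash_key, business_key, source, record_source):
    """Render the loading statement for one hub from its metadata."""
    return HUB_LOAD_TEMPLATE.substitute(
        hub=hub,
        hash_key=hash_key,
        business_key=business_key,
        source=source,
        record_source=record_source,
    )


# One statement per metadata entry; adding a hub means adding one dict.
statements = [render_hub_load(**meta) for meta in HUBS]
print(statements[0])
```

The point of the sketch is the ratio: one template serves every hub, so a new entity costs a few lines of metadata rather than a new hand-written script.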

DevOps and Infrastructure:

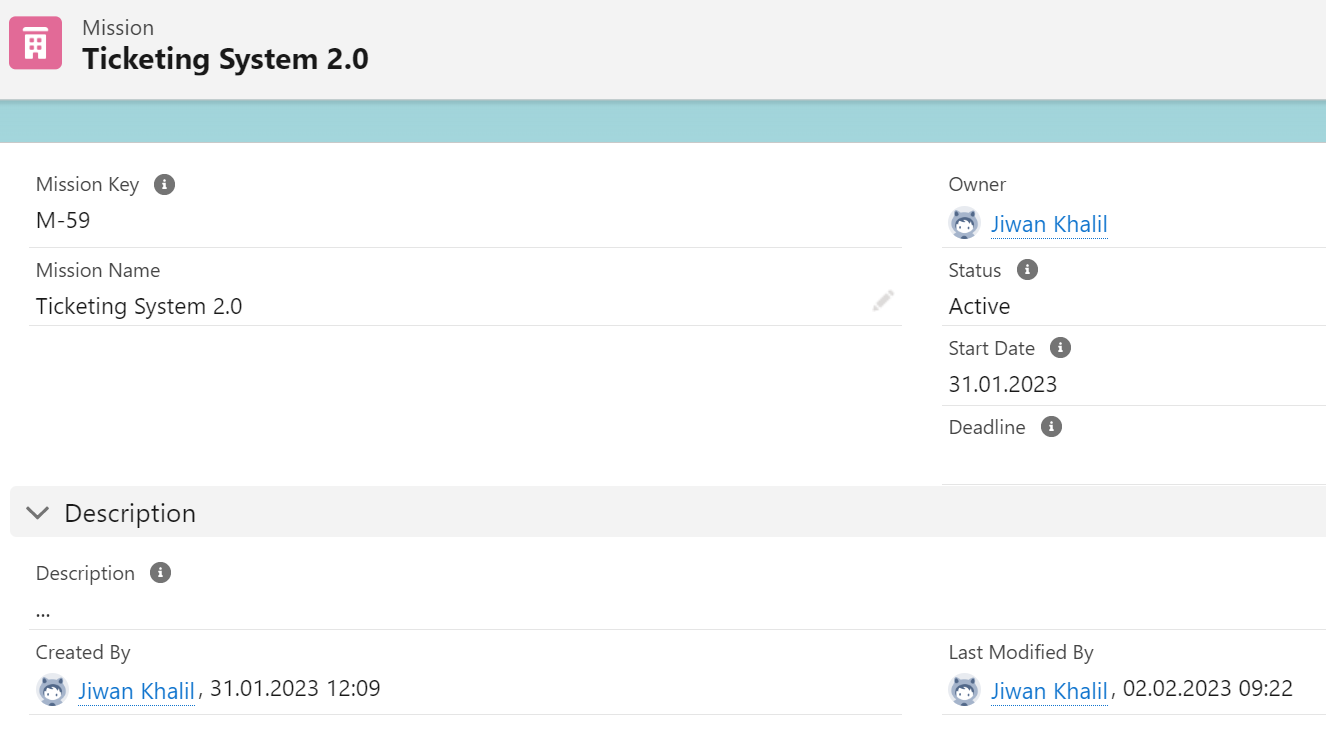

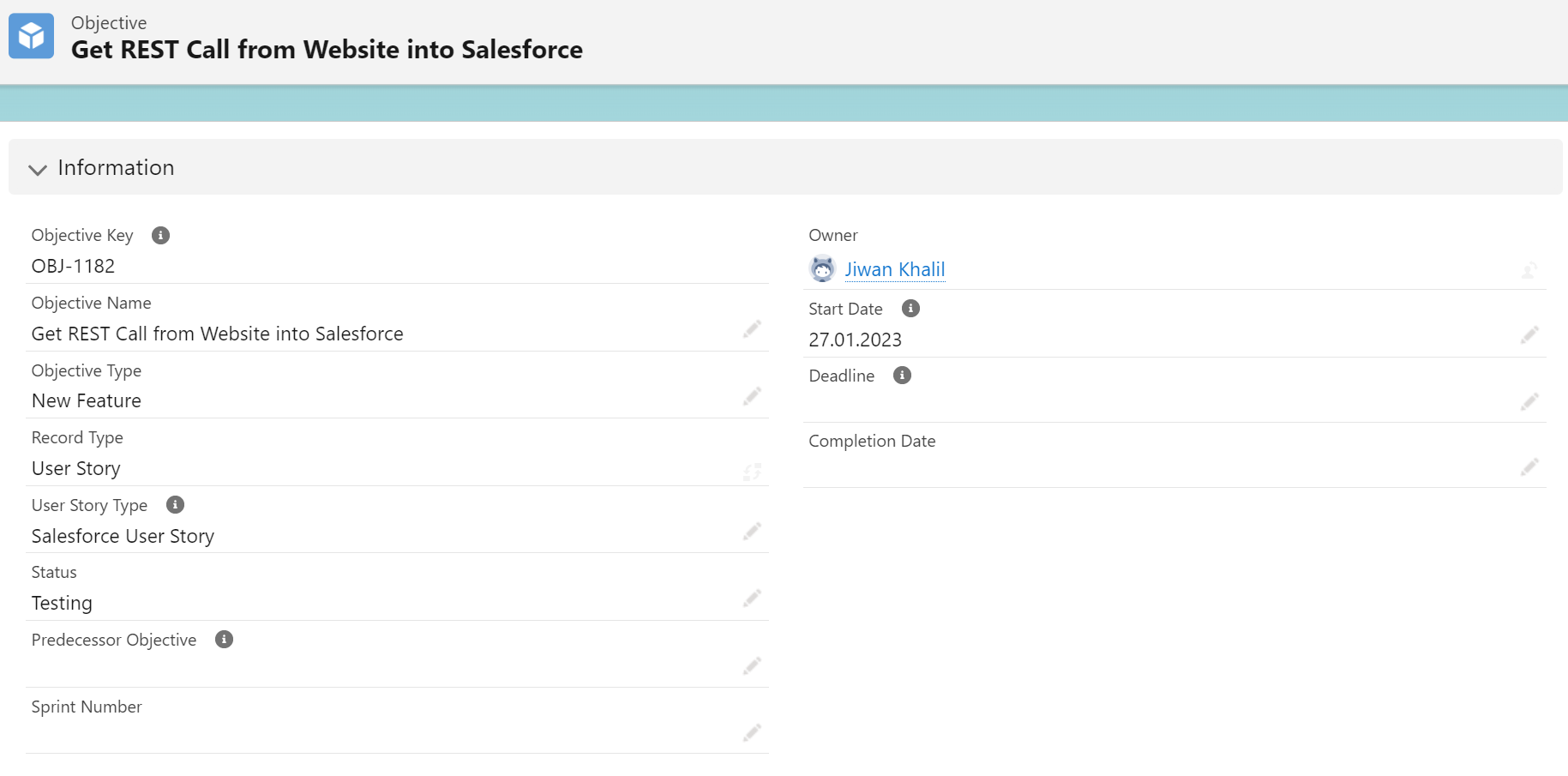

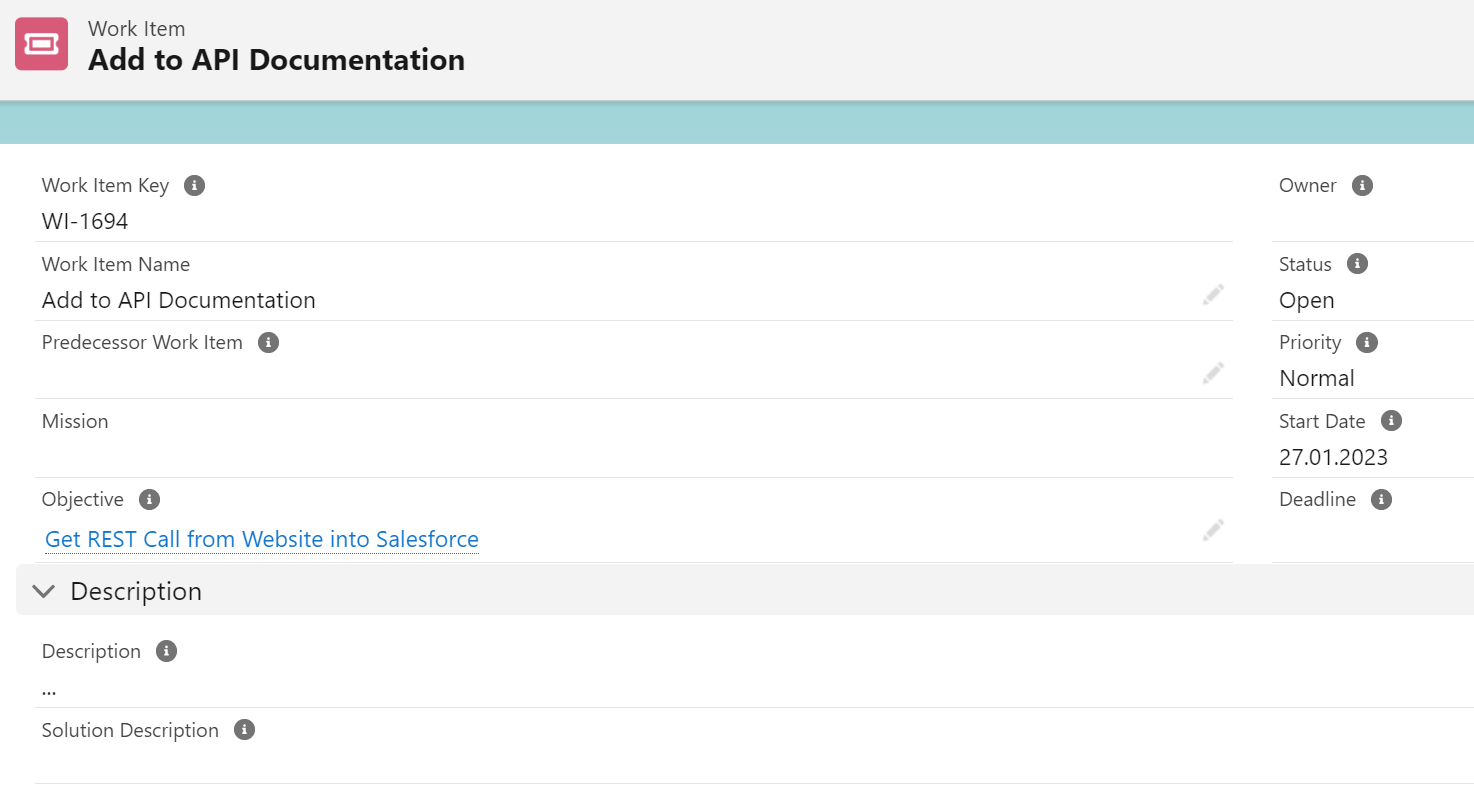

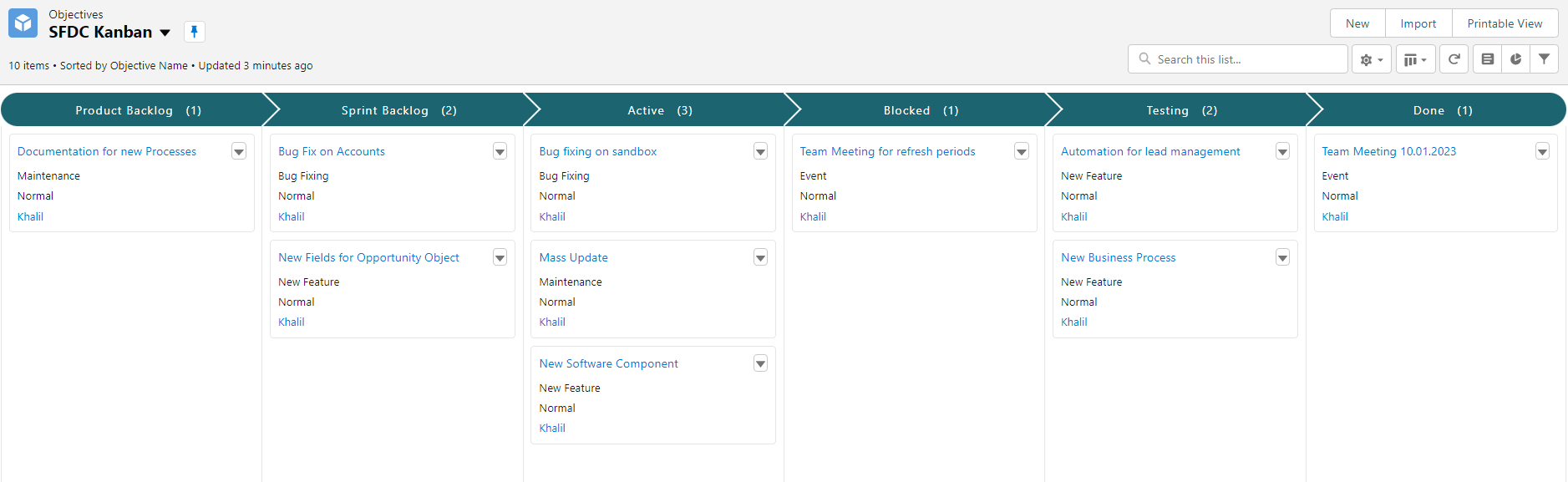

A stable scheduler, or a similar tool, is needed to load data regularly from the sources into the data warehouse. Apache Airflow is one option for this purpose. A DevOps tool for project management is just as important: it helps structure the work and makes the development team more efficient, especially when using agile methodologies like Scrum.
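At its core, a scheduler has to run load steps in an order that respects their dependencies. The sketch below shows only that idea, using Python's standard library rather than Airflow itself. The step names and the `run_step` placeholder are hypothetical, and a real scheduler adds what this sketch leaves out, such as time-based triggers, retries, and monitoring.

```python
from graphlib import TopologicalSorter

# Hypothetical load steps, each mapped to the steps it depends on.
LOAD_DEPENDENCIES = {
    "stage_crm": set(),
    "stage_shop": set(),
    "hub_customer": {"stage_crm", "stage_shop"},
    "hub_order": {"stage_shop"},
    "link_customer_order": {"hub_customer", "hub_order"},
    "sat_customer_details": {"hub_customer"},
    "mart_sales": {"link_customer_order", "sat_customer_details"},
}


def run_step(name):
    """Placeholder for the real work, e.g. triggering a load job."""
    return f"loaded {name}"


def run_load(dependencies):
    """Run every step only after all of its upstream steps have run."""
    executed = []
    for step in TopologicalSorter(dependencies).static_order():
        run_step(step)
        executed.append(step)
    return executed


order = run_load(LOAD_DEPENDENCIES)
print(order)
```

Staging steps come first, hubs follow, links and satellites come after their hubs, and the mart is loaded last. Airflow expresses the same kind of dependency graph as a DAG of tasks.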

Visualization:

Effective data visualization is crucial for analyzing and understanding the data in an EDW. There are various open source visualization tools available, such as Grafana, Superset, or Metabase, which provide powerful capabilities for creating insightful visualizations and dashboards.

Why Data Vault 2.0 is a Powerful Choice in Combination with an Open Source Tech Stack:

Combining Data Vault 2.0 with an open source tech stack offers a powerful solution for building an efficient, scalable EDW. The agile concepts used in Data Vault make it easier to gradually build an open source tech stack over time, starting with basic needs and expanding as necessary.

Before committing to an open source automation tool, it is crucial to check that it is ready for Data Vault and that Data Vault templates are in place. Both enhance efficiency, streamline development, and ensure smooth integration in an open source powered EDW environment.

Benefits of an Open Source Powered EDW:

Building an open source powered EDW offers several advantages. Firstly, open source solutions often provide a vast community of developers, ensuring continuous support, updates, and improvements. Secondly, open source products can be customized and tailored to meet specific project requirements. This flexibility allows you to adapt the tech stack to your organization’s needs and scale as your data processing requirements grow. Lastly, open source solutions typically offer cost-effectiveness by eliminating or reducing licensing fees, making them an attractive option for organizations of all sizes.

Considerations for Scalability and Performance:

Scalability and performance are crucial factors to consider when selecting the right tech stack for an open source powered EDW. As your data processing needs grow, it’s important to choose a tech stack that can scale horizontally or vertically to handle increasing workloads. Technologies like Kubernetes can be considered for container orchestration and load balancing to ensure efficient utilization of resources and smooth scalability. Additionally, performance optimization techniques, such as caching mechanisms, data indexing, and query optimization, should be considered to ensure fast and efficient data retrieval and processing.
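As a small illustration of data indexing, the sketch below compares the query plan for the same query before and after adding an index. It uses SQLite only because it ships with Python, as a stand-in for whichever database you choose. The `sales` table, its columns, and the `query_plan` helper are hypothetical, and other databases such as PostgreSQL have their own `EXPLAIN` output.

```python
import sqlite3

# In-memory SQLite database with a hypothetical sales table.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE sales (id INTEGER PRIMARY KEY, customer_id INTEGER, amount REAL)"
)
conn.executemany(
    "INSERT INTO sales (customer_id, amount) VALUES (?, ?)",
    [(i % 1000, float(i)) for i in range(10_000)],
)

QUERY = "SELECT SUM(amount) FROM sales WHERE customer_id = ?"


def query_plan(connection, sql, params):
    """Return SQLite's human-readable plan for a query as one string."""
    rows = connection.execute(f"EXPLAIN QUERY PLAN {sql}", params).fetchall()
    # The last column of each row holds the plan description.
    return " | ".join(row[-1] for row in rows)


# Without an index, SQLite has to scan the whole table.
plan_before = query_plan(conn, QUERY, (42,))

# With an index on the filter column, it can look the rows up directly.
conn.execute("CREATE INDEX idx_sales_customer ON sales (customer_id)")
plan_after = query_plan(conn, QUERY, (42,))

total = conn.execute(QUERY, (42,)).fetchone()[0]

print("before:", plan_before)
print("after: ", plan_after)
```

The query returns the same result either way; only the access path changes. Checking the plan, rather than guessing, is the habit worth carrying over to a production database.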

Security and Data Privacy:

When dealing with enterprise data, security and data privacy are of utmost importance. Ensure that the chosen tech stack incorporates robust security measures and follows best practices for data encryption, access control, and secure communication protocols. Regular security audits and updates are essential to address any vulnerabilities and ensure compliance with data privacy regulations.

In conclusion, picking the right tech stack for an open source powered EDW is a crucial step in building an efficient and scalable BI system, and it requires careful consideration of several factors. By evaluating vendors, leveraging open source products, and understanding the key components of a robust tech stack, you can ensure a solid foundation for your EDW. Databases, automation tools, DevOps and infrastructure, and visualization choices all play vital roles in creating an effective and customizable solution. Embracing open source solutions provides flexibility, community support, and cost-effectiveness, which makes them an attractive choice for organizations seeking efficient data processing and analysis capabilities. Scalability, performance, security, and data privacy also need attention to ensure the success of your EDW implementation.

By making informed choices and aligning the tech stack with your project objectives, you can build a scalable and efficient EDW that empowers your organization to process and analyze data effectively.

If you are interested in learning more about the topic, watch the recording here for free.

– Lorenz Kindling (Scalefree)