Modeling a Satellite in the instance of any structural changes within the source system

Over time, a source system can change. The question is how to absorb these changes into a Data Vault 2.0 data warehouse, especially when considering the satellites?

It is necessary to find a balance between the reengineering effort and performance when the source table structure changes. To better help those who find structural changes in the source system, this article will present our recommendations, based on our knowledge base, for various types of changes in a source.

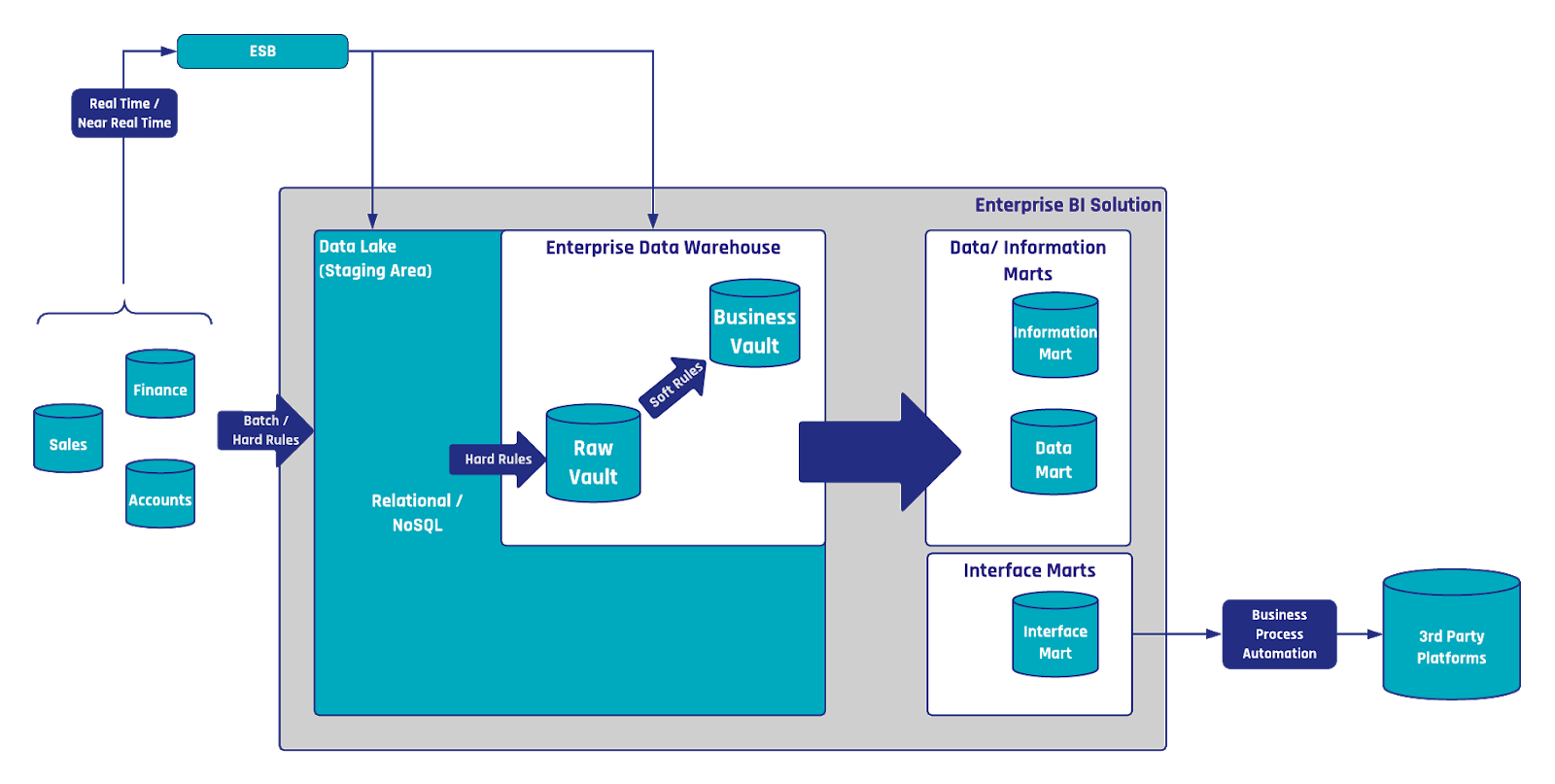

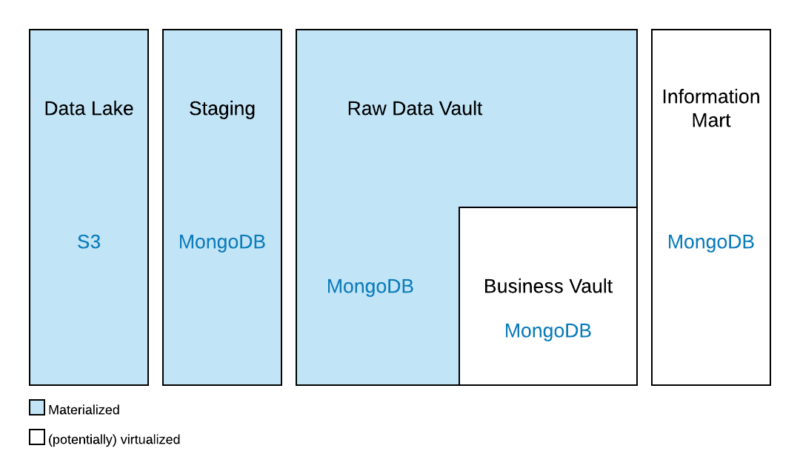

This article describes features embodied in the Data Vault 2.0 model: the foundation of a hub, link, and satellite entities can adjust to changes in the source data easily, thus reducing the cost of reengineering the enterprise data warehouse.

New columns in the source system: when any new columns or attributes are added to the source

There are two options for absorbing new attributes from the source into the data warehouse. First, the existing satellite could be modified.

This is a pragmatic approach but requires the modification of existing code.

On the other hand, it is also possible to create a new satellite for the new attribute, or attributes, without modifying the existing satellites. This has the advantage of a zero code impact but requires more joins in an Information Delivery part of the Data Vault.

The first option does not require this join as the new attribute is added to the existing satellite. The best approach is to compare the advantages and disadvantages of both options in the specific situation as it applies to your situation. Automation tools for example usually can handle the alter table statement automatically without manual coding effort but require changes be made in the database.

Removing columns in the source system from source column deletion

One option is to close the “old” Satellite, i.e. not load it further, as the ETL code is turned off, and create a new satellite which should be loaded. The same approach is used when the underlying data structures from the source are modified in a larger perspective.

Old satellites are turned off, new satellites with the new structure are then loaded.

Another option would be more meaningful if there are only minor changes needed such as the removal of one column. Then “simulating” this column with a NULL value or a value which adds meaning and makes more sense would be more helpful for auditing purposes.

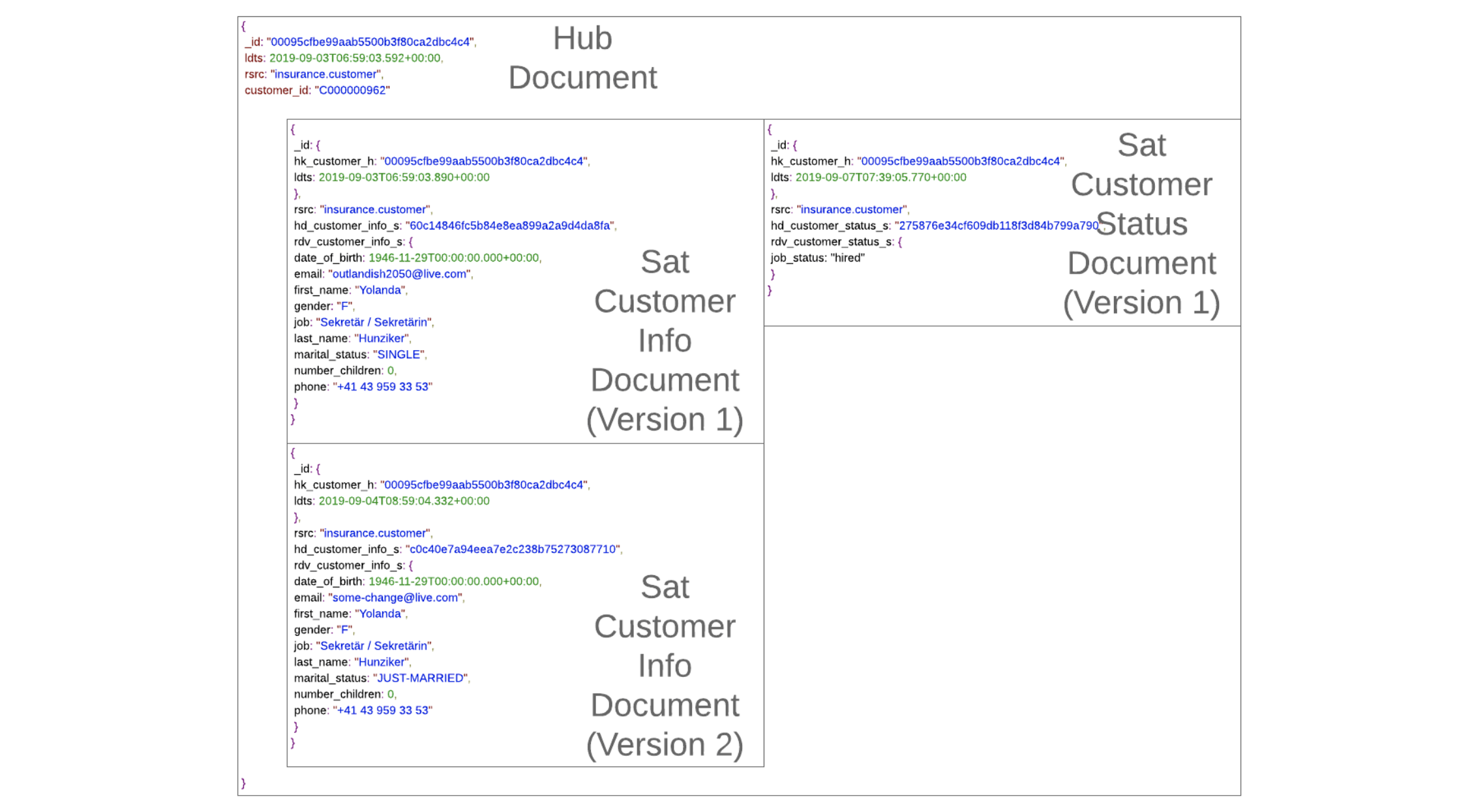

If a new Satellite is created, the end result will be two new columns in the related PIT table (Hash Key + LDTS).

Closing a satellite and creating a new one is also applicable if there are major changes in the source system, for example a new release version of the source system where columns are deleted, renamed and created. In the instance of small changes, especially when columns disappear, we recommend altering the satellite.

Creating a Virtual Dimension table from a PIT table having multiple satellites

When a new satellite for the new attribute, or attributes, is created by not modifying the existing satellite, a new virtual dimension is required to fetch information from PIT tables using both the satellites accordingly based on the required timestamp.

There are two approaches on how the information can be drawn using both the satellites:

- The first approach uses a computed Satellite, in which you combine all satellites with the most recent record per Hash Key and the same structure. Though, this might be a complicated query as it depends upon the amount of data and the number of Satellites to join.

- The second approach is to use a PIT table for all satellites and when querying the data out, for a dimension table for example, you take the record from the leading one, for example using an IIF statement or COALESCE function.

Conclusion

While every situation does require an approach that takes into account the individual nature of the task, the above solutions have proven themselves to be vital when we implement them within our own projects.

We offer these as a way of allowing others to benefit from what our testing, application, and implementation have taught us.