Validation of Relationships in Data Vault 2.0

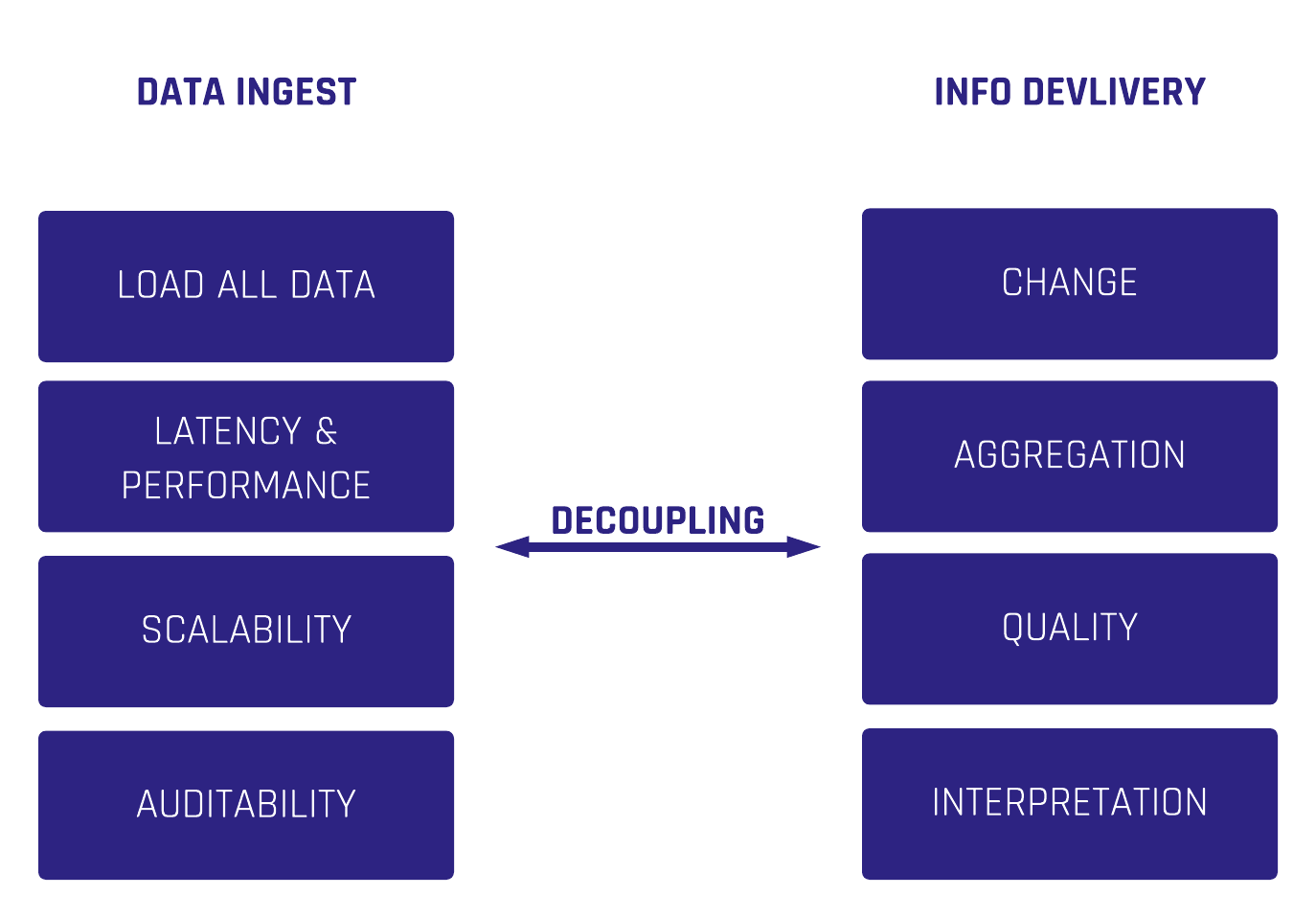

There are different ways of handling validation of relationships from source systems depending on how the data is delivered, (full-extract or CDC), and the way a delete is delivered by the source system, such as a soft delete or hard delete. In Data Vault 2.0, we differentiate data by keys, relationships, and descriptions.

That said, an often underestimated point is the handling and the validation of relationships in Data Vault 2.0.

In the following blog article, we explain what to consider and how to deal with it.

Deletes in Data Vault 2.0

First, let us explain the different kinds of deletes in source systems:

- Hard delete – A record is hard deleted in the source system and no longer appears in the system.

- Soft delete – The deleted record still exists in the source systems database and is flagged as deleted.

Secondly, let’s explore how we find the data in the staging area:

- Full extract – This can be the current status of the source system or a delta/incremental extract.

- CDC (Change Data Capture) – Only new, updated, or deleted records to load data in an incremental/delta way.

To keep the following explanation as simple as possible, our assumption is that we want to mark relationships as deleted as soon as we get the deleted information, even if there is no audit trail from the source system (data aging is another topic).

Delete Detection and Validation of Relationships in Data Vault 2.0

Delete detection for business keys, or Hubs, is straightforward. Soft deletes are handled as descriptive attributes in the Satellite directly and do not take into account whether the data arrives from a full extract or CDC. For hard deletes in the source system, we have to distinguish between full-extract and CDC.

Here we introduce the Effectivity Satellite. In the case of:

- Full-extract – Perform a lookup back into the staging area to check whether the business key still exists. If not, add a record with the deleted information (i.e. a flag and a date) into the Effectivity Satellite.

- CDC – We receive “Delete” information which is a new entry in the Effectivity Satellite.

Delete detection of relationships needs a bit more attention and is often forgotten. With a full extract, we can follow the same approach as followed for business keys: Just check whether or not the Link Hash Key exists in the current staging load and insert a new entry accordingly into the Effectivity Satellite.

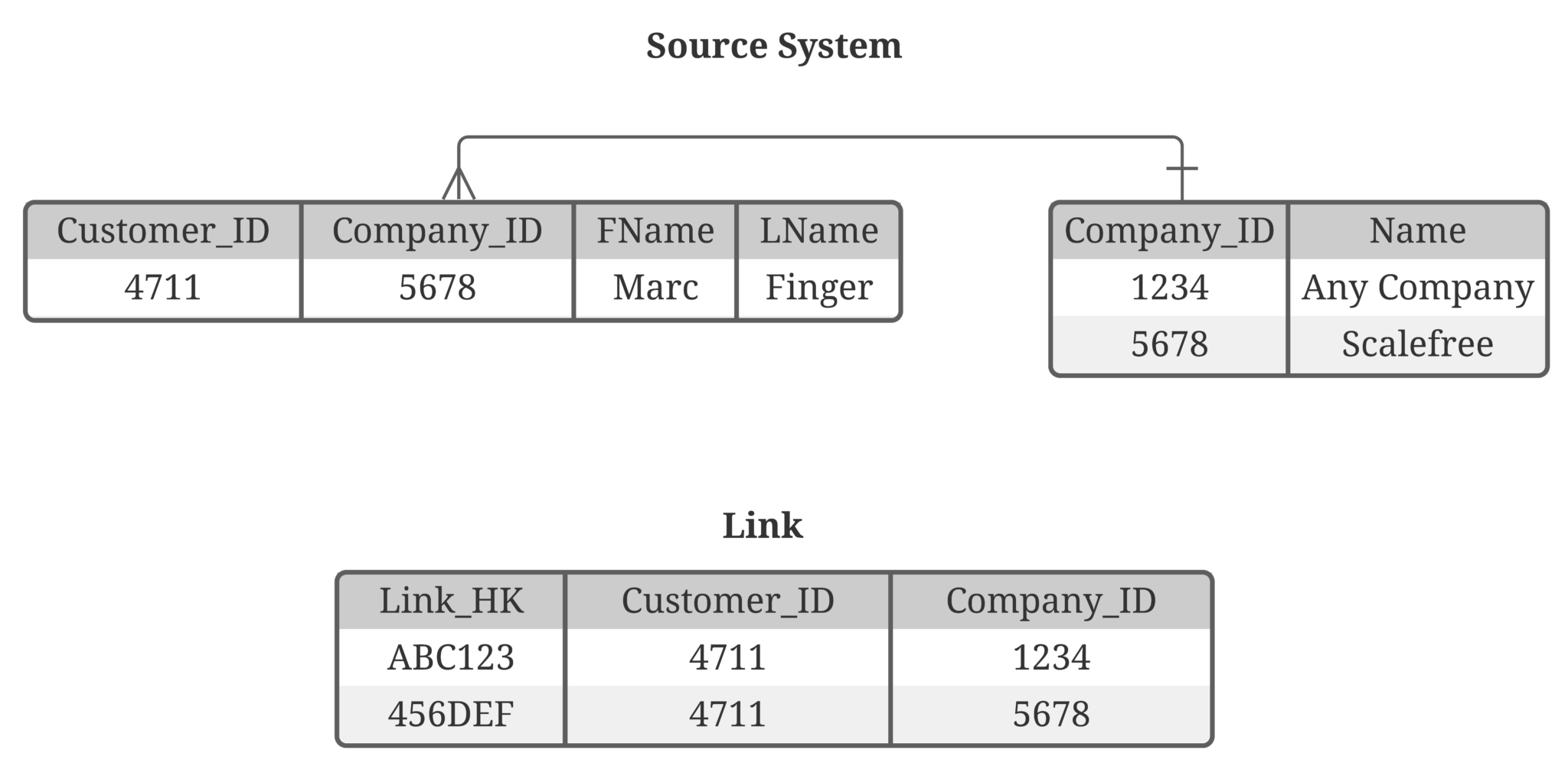

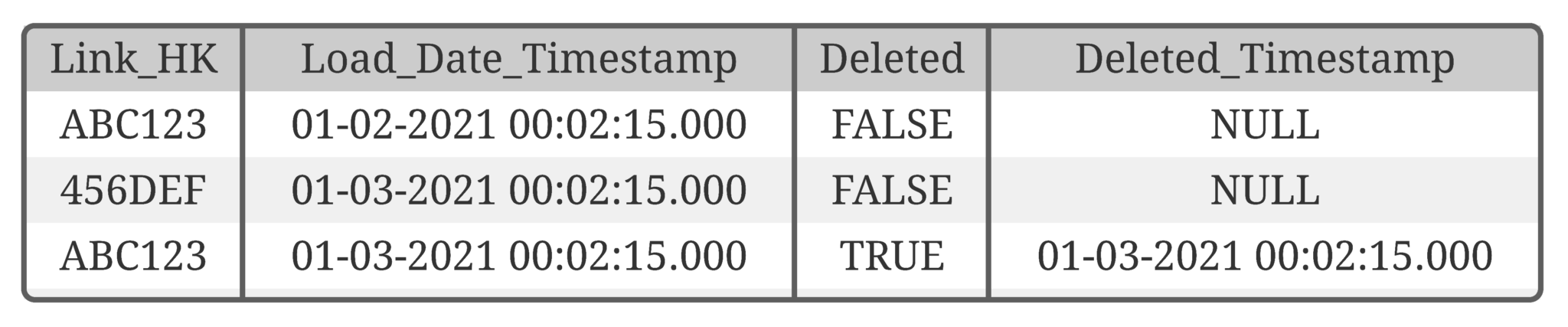

But nowadays, CDC is becoming more common. Though, as CDC delivers deltas only, the challenge now is to identify relationships that no longer exist. The example below shows a relationship between the business objects customer and company. This is a 1:n relationship: